LLM Optimizer: Marketing in the Age of AI Discovery

Your SEO might be perfect. To the AI that’s replacing Google for your customers, you might not exist.

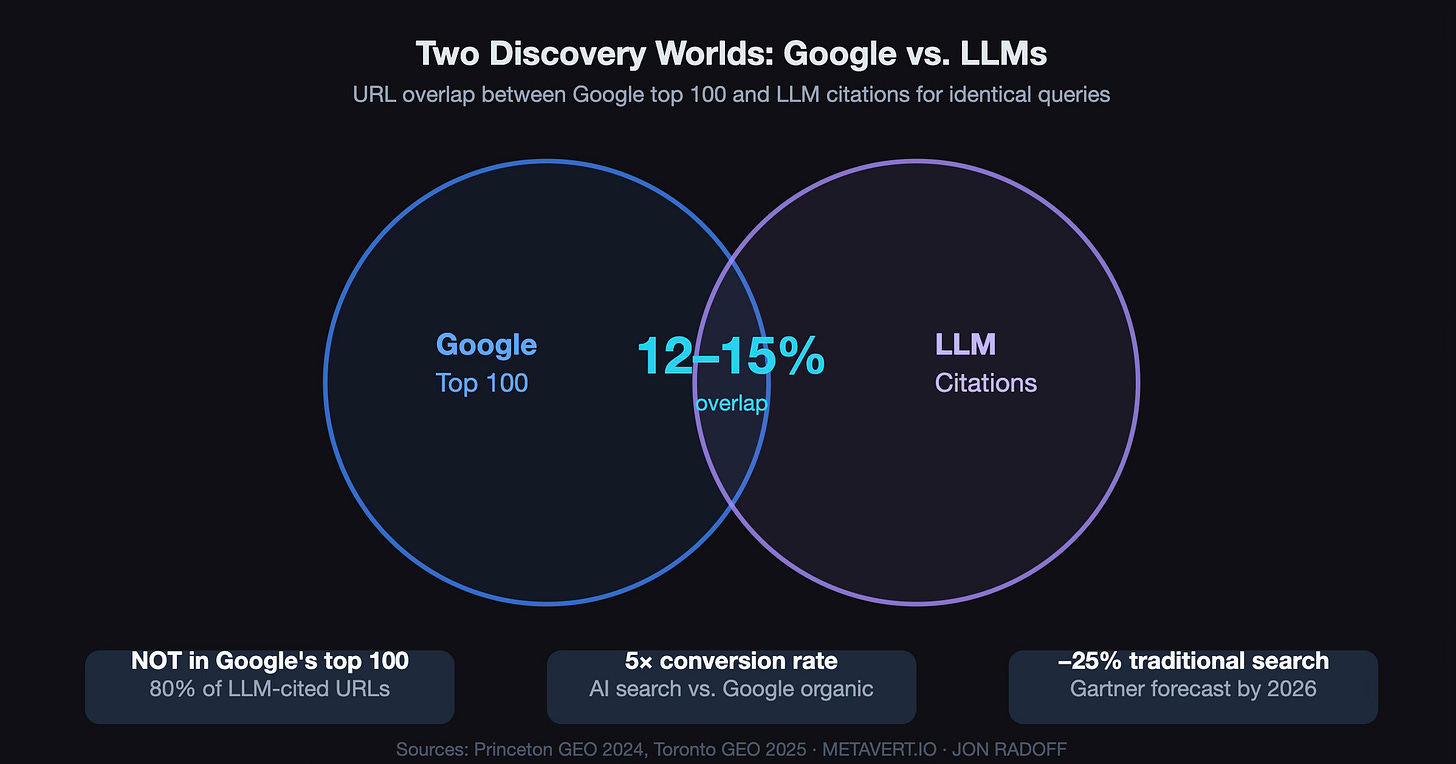

Here’s a number that should reframe how you think about marketing: 80% of the URLs cited by major LLMs don’t appear in Google’s top 100 results for the same queries. Not buried on page five. Not in the supplementary results. Not there at all.

You can be the undisputed SEO champion for your category and still be completely invisible to the systems a growing share of consumers now trust for recommendations. That share is rising fast: 58% of consumers now rely on AI for product recommendations, more than double the rate of two years ago.

I wrote about this dynamic in The Agentic Web: Discovery, Commerce, and Creation, where I described the structural shift from traditional search to LLM-mediated discovery—and how it connects to the larger transformation of commerce, software creation, and the open web. That article laid out the research. This one is about what to do about it.

As I wrote in the Agentic Web piece: when answers become applications (when discovery, evaluation, and action collapse into a single agentic flow), the question of whether an LLM recommends you becomes existential. It's not just about visibility in a text response. It's about whether your brand gets woven into the dynamic, composed experiences that agents build for users.

I’m sharing LLM Optimizer: a free, open-source AI visibility intelligence platform that measures how large language models perceive, cite, and recommend your brand, then gives you research-backed strategies to improve that visibility. It’s the tool I built to make the research actionable.

🔗 GitHub: github.com/jonradoff/llmopt

🔗 Hosted version: llmopt.metavert.io

The Problem: A Completely Different Signal Landscape

Traditional SEO optimizes for Google’s ranking algorithm: backlinks, domain authority, keyword density, page speed. The discipline emerging around AI visibility—called Generative Engine Optimization, or GEO—optimizes for something fundamentally different: the likelihood that a language model will cite, recommend, or surface your brand when answering a relevant question.

And the signals that drive LLM citations are only 12–18% correlated with traditional SEO signals. Domain authority and backlinks, the bedrock of SEO for two decades, barely register.

This isn’t a gradual evolution. It’s a parallel universe. Gartner forecasts a 25% drop in traditional search engine volume by 2026. AI search traffic converts at five times the rate of Google organic: 14.2% versus 2.8%. The people who find you through AI aren’t just browsing. They’re ready to act.

The question every marketer needs to answer is: are you visible in this new world?

What the Research Says

I’ve spent months synthesizing research from Princeton, Toronto, Ahrefs, and several other groups studying LLM citation behavior. The findings are striking — and they invert much of what the SEO industry has taught us for twenty years.

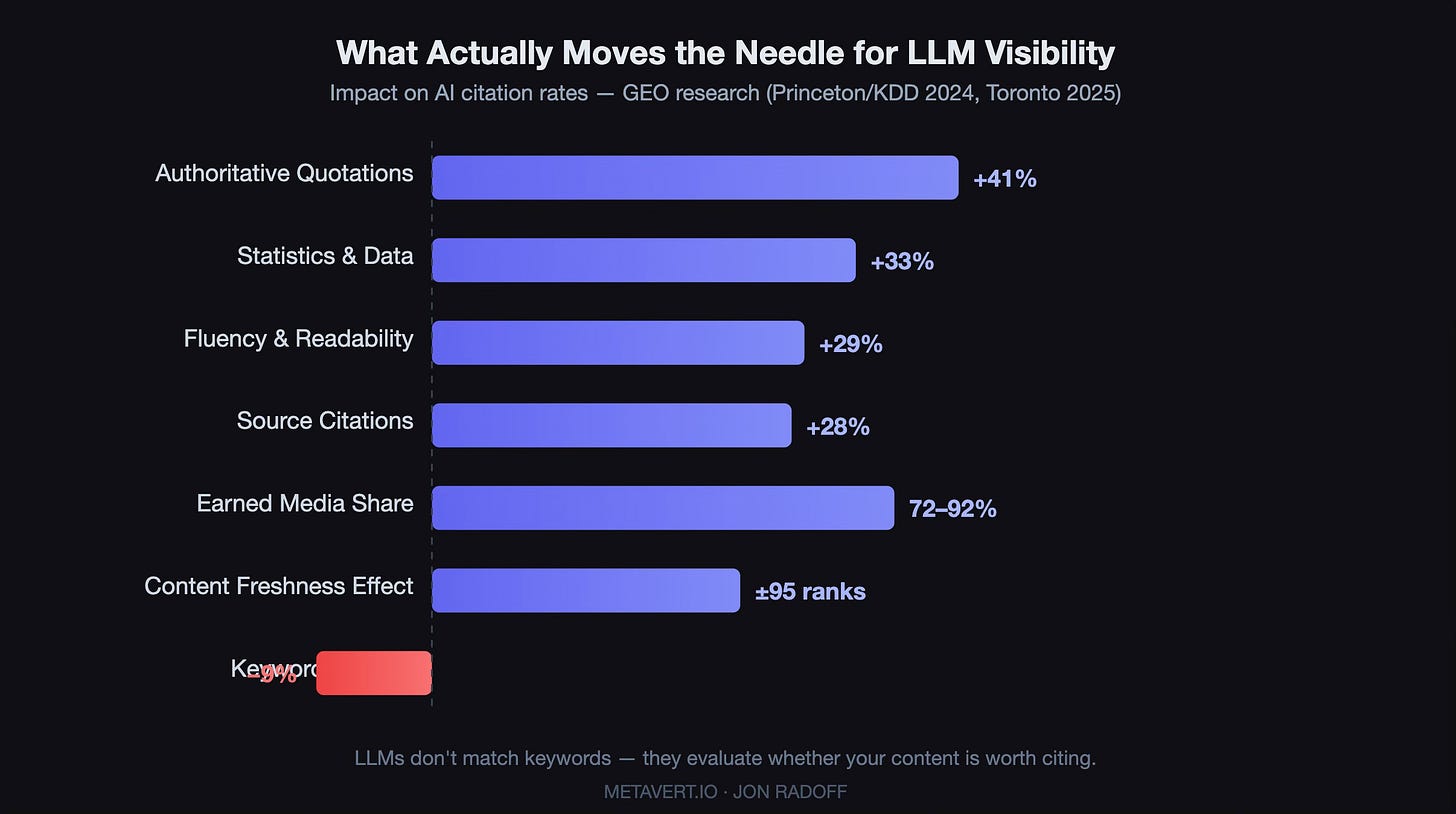

Quotability is king. Adding quotable statements from authoritative sources produces the single largest visibility improvement: +41% in LLM citations. Statistics provide +33%. Improving overall fluency and readability: +29%. Authoritative source references: +25%. Meanwhile, traditional keyword stuffing actually hurts your AI visibility by 9%. LLMs aren’t matching keywords; they’re evaluating whether your content is worth citing.

Earned media dominates. LLMs draw 72–92% of their citations from earned media: reviews, forum discussions, independent articles, YouTube videos. Only 18–27% comes from brand-owned content like your corporate website. This inverts the traditional content marketing playbook. In the LLM world, your reputation is shaped primarily by what others say about you.

YouTube is critical. YouTube appears in 16% of LLM answers: 40% more frequently than Reddit. And here’s the finding that surprised me most while building this: research shows that a 7-billion-parameter video model trained on high-quality YouTube transcripts outperformed a 72-billion-parameter model with lower-quality training data. The implication is stark: if your brand produces video content without accurate captions, you are invisible to the LLMs training on that content. No transcripts = no training signal = no recommendations. It’s a binary gate.

Freshness trumps authority. AI citations skew 25.7% newer than Google citations on average. Content freshness can shift AI recommendation positions by as many as 95 ranks. If you’re not publishing consistently, you’re fading from the AI’s memory.

Training data frequency compounds everything. The NanoKnow research (2026) found that LLM answer accuracy more than doubles as a brand’s training data frequency increases from rare (1–5 documents) to high-frequency (51+ documents). But the really interesting finding is the compounding effect: being present in training data and being retrievable via search provides a multiplicative advantage. This means the strategy isn’t just about optimizing your website — it’s about being present across enough sources that you cross the threshold where LLMs have internalized your brand as a concept worth recommending. Every earned media mention, every Reddit discussion, every YouTube video with good transcripts contributes to that critical mass.

The playing field has reset. Sites that rank fifth on Google see a 115% visibility improvement from GEO. Sites that rank first actually see a 30% decline, because the competitive landscape is completely reshuffled. For challenger brands and solopreneurs, this is extraordinarily good news. The democratization effect is real: the twenty-year head start that established brands built through SEO doesn't transfer to the new world. The advantage goes to whoever understands these dynamics first.

Different LLMs, different realities. One finding that informed LLM Optimizer’s multi-provider testing: citation concentration varies dramatically across providers. The top 20 news sources capture between 28% and 67% of all AI citations, depending on which LLM you’re asking. Cross-family similarity between providers ranges from just 0.11 to 0.58. In plain English: being visible on ChatGPT tells you almost nothing about whether you’re visible on Claude or Gemini. You have to test across all of them.
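If you want to run a similar cross-provider comparison on your own target queries, one simple measure is the Jaccard similarity between the sets of domains each provider cites. This is a minimal sketch under that assumption: the research cited above may have used a different similarity metric, and the function name `jaccard` is illustrative. On this measure, 0 means two providers share no sources and 1 means they cite identical sets.

```go
package main

// jaccard returns |A ∩ B| / |A ∪ B| for two lists of cited domains.
// Duplicates within a list are ignored. Two empty lists count as identical (1.0).
func jaccard(a, b []string) float64 {
	setA := make(map[string]bool, len(a))
	for _, d := range a {
		setA[d] = true
	}
	setB := make(map[string]bool, len(b))
	for _, d := range b {
		setB[d] = true
	}
	if len(setA) == 0 && len(setB) == 0 {
		return 1.0
	}
	intersection := 0
	for d := range setA {
		if setB[d] {
			intersection++
		}
	}
	union := len(setA) + len(setB) - intersection
	return float64(intersection) / float64(union)
}
```

For example, comparing `[a.com b.com c.com]` with `[b.com c.com d.com]` gives 2 shared domains out of 4 distinct ones, or 0.5.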

What LLM Optimizer Does

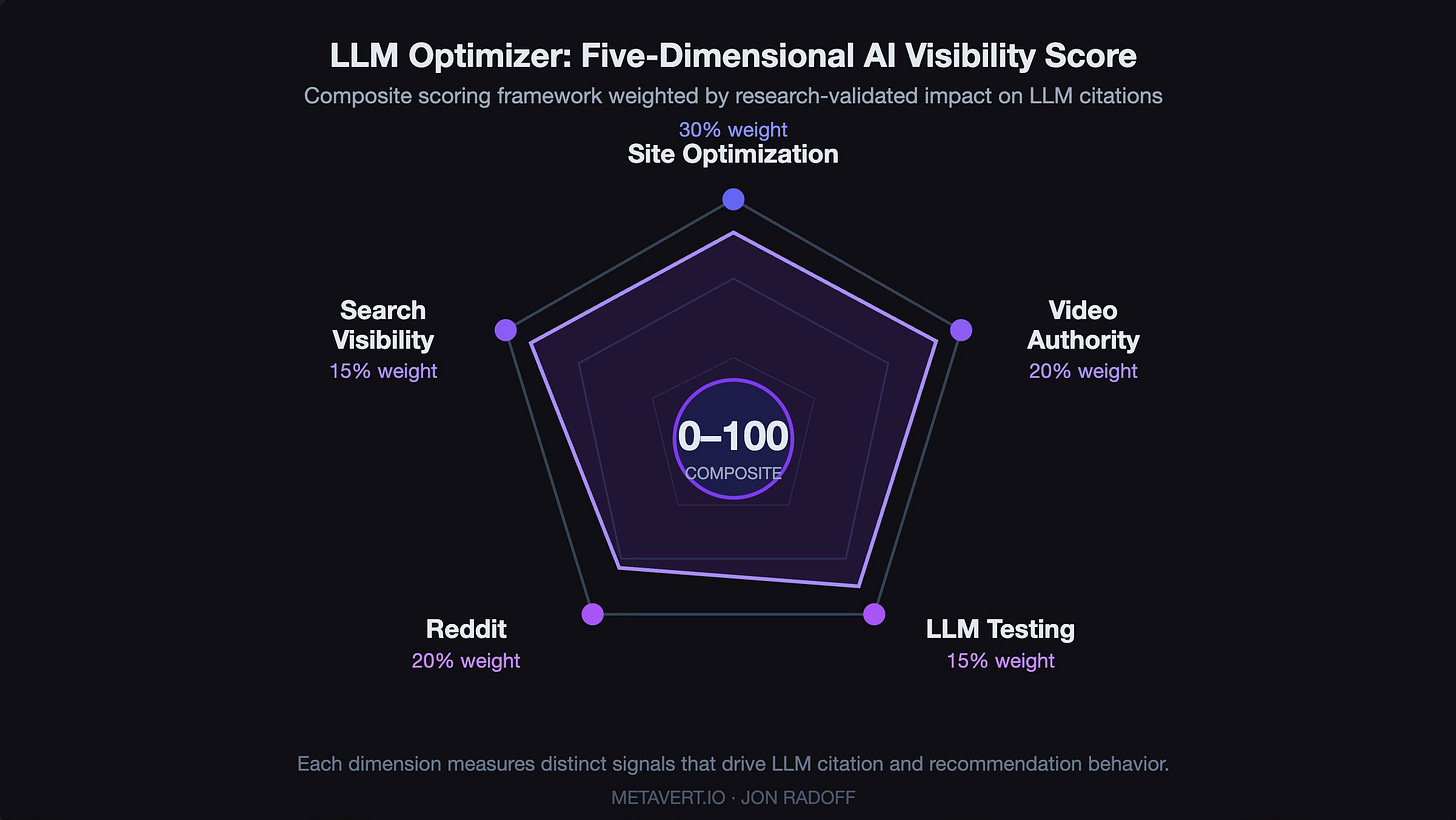

LLM Optimizer analyzes your brand across five dimensions, each grounded in the peer-reviewed research above, and produces a composite AI Visibility Score (0–100) with prioritized, actionable recommendations.

Answer Engine Optimization analyzes your website’s content against the strategies validated by the GEO research. It scores pages on quotation density, statistical evidence, source citations, fluency, structural optimization, and machine readability; it then produces per-page optimization scores with specific rewrite recommendations.
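The article doesn't specify how these signals are measured. As a rough illustration of what "quotation density" or "statistical evidence" might mean mechanically, here is a hypothetical regex-based counter that reports raw counts and per-100-word densities. It is not LLM Optimizer's scoring logic; the patterns are assumptions chosen for readability and will miss or over-count some cases.

```go
package main

import (
	"regexp"
	"strings"
)

// contentSignals holds raw counts and per-100-word densities for a few
// GEO-style signals. Hypothetical heuristic, not LLM Optimizer's scoring.
type contentSignals struct {
	Words                                      int
	Quotes, Stats, Citations                   int
	QuotesPer100, StatsPer100, CitationsPer100 float64
}

var (
	// A run of 20+ characters inside straight or curly double quotes.
	quoteRe = regexp.MustCompile(`["“][^"”]{20,}["”]`)
	// A number followed by a percent sign or magnitude word, e.g. "41%" or "72 billion".
	statRe = regexp.MustCompile(`\b\d+(\.\d+)?\s?(%|percent|million|billion)`)
	// Bracketed references like [3] or author-year attributions like (Smith, 2024).
	citeRe = regexp.MustCompile(`\[\d+\]|\([A-Z][A-Za-z]+( et al\.)?,? \d{4}\)`)
)

// analyzeSignals counts each signal in plain text and normalizes by word count.
func analyzeSignals(text string) contentSignals {
	s := contentSignals{
		Words:     len(strings.Fields(text)),
		Quotes:    len(quoteRe.FindAllString(text, -1)),
		Stats:     len(statRe.FindAllString(text, -1)),
		Citations: len(citeRe.FindAllString(text, -1)),
	}
	if s.Words == 0 {
		return s // avoid dividing by zero on empty pages
	}
	per100 := func(n int) float64 { return float64(n) * 100 / float64(s.Words) }
	s.QuotesPer100 = per100(s.Quotes)
	s.StatsPer100 = per100(s.Stats)
	s.CitationsPer100 = per100(s.Citations)
	return s
}
```

Normalizing per 100 words keeps long pages from scoring higher simply because they contain more text.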

Video Authority Analysis performs a two-phase assessment of your YouTube presence. Phase 1 uses a fast model to assess individual videos for transcript quality, keyword alignment, and caption availability. Phase 2 feeds those assessments into a reasoning model for four-pillar scoring: Transcript Authority, Topical Dominance, Citation Network, and Brand Narrative.

Reddit Authority Analysis scrapes Reddit discussions mentioning your brand and analyzes community sentiment, competitive positioning, and training data signal strength. This matters because what your community says about you has 4–6× more influence on LLM recommendations than anything on your own website.

Search Visibility Analysis evaluates your site across both Google AI Overviews and standalone LLMs. It checks robots.txt AI crawler policies, structured data, content freshness, brand search momentum, and earned media signals.
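Checking robots.txt for AI crawler policies can be approximated in a few dozen lines. The sketch below is a deliberate simplification, not LLM Optimizer's implementation and not a full RFC 9309 parser: it only flags a blanket `Disallow: /` in the group that applies to each crawler, falls back to the `*` group when no crawler-specific group exists, and ignores `Allow` precedence, wildcards, and path-specific rules. The user-agent tokens listed (GPTBot, ClaudeBot, Google-Extended, PerplexityBot, CCBot) are publicly documented ones; the list is illustrative, not exhaustive.

```go
package main

import (
	"bufio"
	"strings"
)

// aiCrawlers is an illustrative, non-exhaustive list of AI-related user-agent tokens.
var aiCrawlers = []string{"GPTBot", "ClaudeBot", "Google-Extended", "PerplexityBot", "CCBot"}

// blockedAgents reports, for each AI crawler, whether the robots.txt group that
// applies to it contains a blanket "Disallow: /". Simplified: Allow lines,
// wildcards, and path-specific rules are ignored.
func blockedAgents(robots string) map[string]bool {
	type group struct {
		agents   []string
		blockAll bool
	}
	var groups []*group
	var cur *group
	lastWasAgent := false

	sc := bufio.NewScanner(strings.NewReader(robots))
	for sc.Scan() {
		line := sc.Text()
		if i := strings.Index(line, "#"); i >= 0 {
			line = line[:i] // strip comments
		}
		key, val, ok := strings.Cut(line, ":")
		if !ok {
			continue // blank or malformed line
		}
		key = strings.ToLower(strings.TrimSpace(key))
		val = strings.TrimSpace(val)
		switch key {
		case "user-agent":
			// Consecutive User-agent lines share a single group of rules.
			if cur == nil || !lastWasAgent {
				cur = &group{}
				groups = append(groups, cur)
			}
			cur.agents = append(cur.agents, strings.ToLower(val))
			lastWasAgent = true
		case "disallow":
			if cur != nil && val == "/" {
				cur.blockAll = true
			}
			lastWasAgent = false
		default:
			lastWasAgent = false
		}
	}

	result := make(map[string]bool, len(aiCrawlers))
	for _, bot := range aiCrawlers {
		name := strings.ToLower(bot)
		matched, wildcardBlocks := false, false
		for _, g := range groups {
			for _, a := range g.agents {
				if a == name {
					matched = true
					result[bot] = result[bot] || g.blockAll
				} else if a == "*" {
					wildcardBlocks = wildcardBlocks || g.blockAll
				}
			}
		}
		if !matched {
			result[bot] = wildcardBlocks // no specific group: the * group applies
		}
	}
	return result
}
```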

LLM Knowledge Testing directly queries multiple LLM providers (Claude, ChatGPT, Gemini, and Grok) with your brand’s target queries and analyzes how each model responds. You can see exactly how each AI perceives your brand, compare your presence across providers, and run head-to-head competitor comparisons. Each LLM has substantially different citation behavior (cross-family similarity ranges from just 0.11 to 0.58), so testing across all four reveals blind spots you’d never find with a single provider.

All five dimensions aggregate into a Brand Intelligence report: a composite score weighted across Optimization (30%), Video Authority (20%), Reddit Authority (20%), Search Visibility (15%), and LLM Testing (15%). The weighting reflects the research: your site content is the foundation, but earned media signals (YouTube and Reddit) collectively outweigh it. The report generates prioritized action items that track through to completion — not vague advice like “improve your content,” but specific, evidence-based recommendations tied to the signals that actually move LLM citation behavior.
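The composite is a weighted sum, so it can be sketched directly from the weights above. How LLM Optimizer handles a dimension that wasn't analyzed (for example, when no YouTube API key is configured) isn't stated; this sketch assumes the remaining weights are renormalized, and the dimension keys are hypothetical.

```go
package main

import "math"

// weights mirrors the percentages stated in the article; they sum to 1.0.
// The map keys are hypothetical identifiers.
var weights = map[string]float64{
	"optimization":      0.30,
	"video_authority":   0.20,
	"reddit_authority":  0.20,
	"search_visibility": 0.15,
	"llm_testing":       0.15,
}

// compositeScore combines per-dimension scores (0-100) into a 0-100 composite,
// rounded to one decimal place. Missing dimensions are skipped and the remaining
// weights renormalized (an assumption, not documented behavior).
func compositeScore(scores map[string]float64) float64 {
	var total, weightSum float64
	for dim, w := range weights {
		s, ok := scores[dim]
		if !ok {
			continue // dimension not analyzed
		}
		s = math.Max(0, math.Min(100, s)) // clamp out-of-range inputs
		total += w * s
		weightSum += w
	}
	if weightSum == 0 {
		return 0
	}
	return math.Round(total/weightSum*10) / 10
}
```

With scores of 80, 50, 60, 70, and 40 for the five dimensions in the order listed above, the result is 0.30×80 + 0.20×50 + 0.20×60 + 0.15×70 + 0.15×40 = 62.5.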

You can also generate PDF reports for sharing with stakeholders or clients: useful if you’re an agency or consultant helping brands navigate this transition.

Agentic by Design

If you've been following my recent projects, you know I've been building a set of open-source tools designed for the way software is actually being made and used right now: through conversations with AI agents. LightCMS manages my site, metavert.io, through natural language. LastSaaS provides the SaaS infrastructure underneath. LLM Optimizer is the latest piece: I use it alongside LightCMS to analyze my own site's AI visibility, identify gaps, and then have agents implement the improvements directly. The whole workflow (analysis, recommendations, implementation) happens through conversation.

LLM Optimizer includes a full MCP server (Model Context Protocol, with OAuth 2.1 and Streamable HTTP transport) so AI assistants like Claude can access your analysis data, visibility scores, and action items programmatically. A marketer using Cowork or Claude Desktop can connect to LLM Optimizer and work through the to-do list: “What’s my current visibility score? What should I improve first? Draft the content changes.” The tool becomes part of the agent’s workflow rather than a separate dashboard you have to remember to check.
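Under the hood, an MCP client talks to a server like this one with JSON-RPC 2.0 messages. With the Streamable HTTP transport, each client message is an HTTP POST to the server's MCP endpoint, the client signals that it accepts either JSON or an SSE stream in response, and the OAuth 2.1 access token travels as a bearer token. The sketch below builds (but doesn't send) a `tools/call` request; the endpoint, the tool name `get_visibility_score`, and its arguments are hypothetical, and a real client must first complete the `initialize` handshake, which is omitted here.

```go
package main

import (
	"bytes"
	"encoding/json"
	"net/http"
)

// rpcRequest is a JSON-RPC 2.0 request envelope, which MCP uses for its messages.
type rpcRequest struct {
	JSONRPC string         `json:"jsonrpc"`
	ID      int            `json:"id"`
	Method  string         `json:"method"`
	Params  map[string]any `json:"params"`
}

// newToolCall builds, but does not send, an MCP "tools/call" request for the
// Streamable HTTP transport. Assumes initialize has already completed.
func newToolCall(endpoint, accessToken, sessionID string, id int, tool string, args map[string]any) (*http.Request, error) {
	body, err := json.Marshal(rpcRequest{
		JSONRPC: "2.0",
		ID:      id,
		Method:  "tools/call",
		Params:  map[string]any{"name": tool, "arguments": args},
	})
	if err != nil {
		return nil, err
	}
	req, err := http.NewRequest(http.MethodPost, endpoint, bytes.NewReader(body))
	if err != nil {
		return nil, err
	}
	req.Header.Set("Content-Type", "application/json")
	// The server may reply with a single JSON response or an SSE stream.
	req.Header.Set("Accept", "application/json, text/event-stream")
	// OAuth 2.1 access token obtained through the authorization flow.
	req.Header.Set("Authorization", "Bearer "+accessToken)
	if sessionID != "" {
		// Session ID the server returned during initialize, if it assigned one.
		req.Header.Set("Mcp-Session-Id", sessionID)
	}
	return req, nil
}
```

In practice, an assistant like Claude Desktop handles all of this for you; the point is that "connect to LLM Optimizer" reduces to authenticated JSON-RPC calls against a single endpoint.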

This is what I mean when I talk about the agentic web. The answer isn’t just a page of text anymore — it’s a dynamic experience that acts on your behalf. Your marketing tools should work the same way.

How to Use It

Bring your own API keys. At minimum, you’ll need an Anthropic API key; it powers the analysis engine. For the full picture, add OpenAI, Gemini, and Grok keys so you can test your visibility across all four major LLMs and see how each one perceives your brand differently. A YouTube Data API key unlocks the video authority analysis.
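Which analyses you can run depends on which keys are present. A minimal sketch of that gating, assuming environment variables named `ANTHROPIC_API_KEY`, `OPENAI_API_KEY`, `GEMINI_API_KEY`, `XAI_API_KEY`, and `YOUTUBE_API_KEY`; these names are hypothetical, so check the repository's `.env.dev.example` for the ones it actually uses.

```go
package main

import (
	"errors"
	"os"
)

// Config records which integrations are available given the configured keys.
type Config struct {
	AnthropicKey string
	Providers    []string // LLM providers available for knowledge testing
	VideoEnabled bool     // a YouTube Data API key is present
}

// loadConfig reads keys through the supplied lookup function (os.Getenv in production).
// All variable names here are hypothetical.
func loadConfig(getenv func(string) string) (Config, error) {
	cfg := Config{AnthropicKey: getenv("ANTHROPIC_API_KEY")}
	if cfg.AnthropicKey == "" {
		return cfg, errors.New("ANTHROPIC_API_KEY is required: it powers the analysis engine")
	}
	cfg.Providers = []string{"claude"}
	optional := []struct{ env, provider string }{
		{"OPENAI_API_KEY", "chatgpt"},
		{"GEMINI_API_KEY", "gemini"},
		{"XAI_API_KEY", "grok"},
	}
	for _, o := range optional {
		if getenv(o.env) != "" {
			cfg.Providers = append(cfg.Providers, o.provider)
		}
	}
	cfg.VideoEnabled = getenv("YOUTUBE_API_KEY") != ""
	return cfg, nil
}

// loadFromEnv is the production entry point.
func loadFromEnv() (Config, error) { return loadConfig(os.Getenv) }
```

Passing the lookup function in, rather than calling `os.Getenv` directly, lets tests supply keys without touching the real environment.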

You have two options:

Use the hosted version at llmopt.metavert.io. Bring your API keys, pay a small subscription fee to support development and hosting, and you’re running analyses in minutes.

Self-host it. Clone the repo from GitHub, set up MongoDB, add your API keys, and run it yourself. It’s MIT licensed: no restrictions, no catch. The backend is Go, the frontend is React 19 with TypeScript, and it deploys cleanly on Fly.io or anywhere else you host containers.

```bash
git clone https://github.com/jonradoff/llmopt.git
cd llmopt && cp .env.dev.example .env
# Add your API keys to .env
cd backend && go build -o llmopt . && ./llmopt
```

The README walks through every step for both standalone and SaaS deployment modes.

For the technical folks: the backend is Go 1.24 with a clean LLM provider abstraction that supports Anthropic, OpenAI, Gemini, and Grok through a common interface. Each analysis streams results via SSE so you see progress in real time. The frontend is React 19 with TypeScript and Tailwind. MongoDB for storage. Cloudflare WARP integrated as a SOCKS5 proxy fallback for Reddit scraping. The codebase is structured the same way I structure all my recent projects — consistent patterns, clear separation of concerns, designed so that AI coding agents can navigate and extend it fluently. Fork it and make it yours.
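For readers unfamiliar with SSE: the server keeps one HTTP response open and writes text frames, each ending in a blank line, flushing after every frame so the browser's `EventSource` sees progress immediately. The sketch below is a hypothetical progress handler using only the standard library, not the repository's actual code; the event names and `progressEvent` fields are assumptions.

```go
package main

import (
	"encoding/json"
	"fmt"
	"net/http"
)

// progressEvent is a hypothetical progress message for a long-running analysis.
type progressEvent struct {
	Step    string `json:"step"`
	Percent int    `json:"percent"`
}

// formatSSE renders one Server-Sent Events frame. json.Marshal emits no
// newlines, so the payload fits on a single "data:" line.
func formatSSE(event string, payload any) (string, error) {
	data, err := json.Marshal(payload)
	if err != nil {
		return "", err
	}
	return fmt.Sprintf("event: %s\ndata: %s\n\n", event, data), nil
}

// analysisHandler streams one progress frame per step, then a "done" frame.
func analysisHandler(steps []string) http.HandlerFunc {
	return func(w http.ResponseWriter, r *http.Request) {
		w.Header().Set("Content-Type", "text/event-stream")
		w.Header().Set("Cache-Control", "no-cache")
		flusher, canFlush := w.(http.Flusher)
		send := func(event string, payload any) bool {
			frame, err := formatSSE(event, payload)
			if err != nil {
				return false
			}
			if _, err := fmt.Fprint(w, frame); err != nil {
				return false
			}
			if canFlush {
				flusher.Flush() // push the frame now instead of buffering
			}
			return true
		}
		for i, step := range steps {
			if r.Context().Err() != nil {
				return // client disconnected; stop streaming
			}
			// A real handler would run the work for this step here.
			if !send("progress", progressEvent{Step: step, Percent: (i + 1) * 100 / len(steps)}) {
				return
			}
		}
		send("done", map[string]bool{"ok": true})
	}
}
```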

The Bigger Picture

The shift I described in The Agentic Web is accelerating. Discovery is migrating from search engines to language models. Commerce is following. The signals that determine whether you get recommended are fundamentally different from the signals you’ve been optimizing for.

This is a rare moment where the competitive landscape genuinely resets. The incumbents who dominated Google don’t automatically dominate AI recommendations. The SEO playbook that worked for twenty years doesn’t transfer. The advantage goes to whoever understands the new rules first and adapts their content strategy accordingly.

LLM Optimizer is my attempt to make that adaptation measurable and actionable — grounded in the best available research, open-source so anyone can use and extend it, and designed for the agentic workflows that are becoming the default way we build and market.

I’ve been saying for a while that the constraint for today’s entrepreneur isn’t engineering capability: it’s imagination.

The same principle applies to marketing in the age of AI discovery. The constraint isn’t budget or team size. It’s understanding the new rules of the game. The research exists. The tools exist. The question is whether you act on them before your competitors do.

The research and scoring methodology that inform this tool are documented in detail in the LLM Visibility Research Summary. I’d encourage anyone navigating this shift to dig in. The more people who understand these dynamics, the better the ecosystem gets for everyone building on the open web.

The playing field has reset. Time to play.

Further Reading

The Agentic Web: Discovery, Commerce, and Creation: The full research deep-dive on LLM-mediated discovery, agentic commerce, and web-native creation — Part 3 of the Web Renaissance series.

Software’s Creator Era Has Arrived: How AI agents are democratizing software development — the thesis behind why solo founders can now build tools like this.

I Built a CMS for the Age of Agents: LightCMS — the AI-native content management system I use alongside LLM Optimizer.

The Last SaaS Boilerplate: LastSaaS — the open-source SaaS foundation that LLM Optimizer is built on.

Enshittification and the Future of AI Agents: The platform decay cycle and why open-source, agent-native tools are the escape hatch.

Tools and Research

🔗 LLM Optimizer on GitHub — MIT licensed, free

🔗 LLM Optimizer hosted — Bring your API keys

📄 GEO: Generative Engine Optimization (Princeton/KDD 2024)

📄 NanoKnow (2026) — Training data frequency and LLM accuracy

📄 AI Search Arena (2025) — Cross-provider citation analysis