The Agentic Web: Discovery, Commerce, and Creation

When answers become applications, the open web wins

Part 3 of the Web Renaissance series. Reading Part 1 (the Great Rebundling) and Part 2 (WebGPU, WebAssembly) is optional.

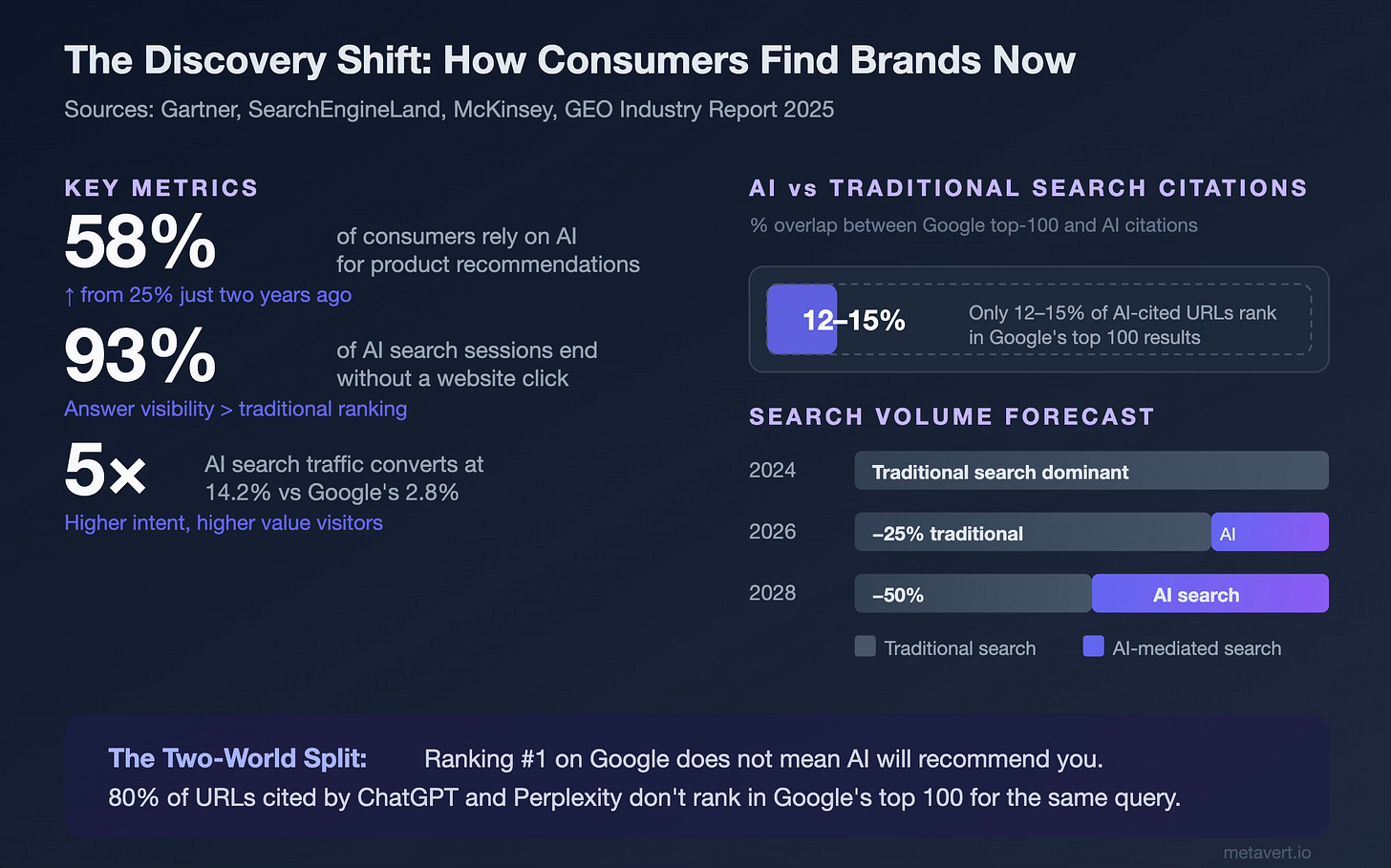

Here’s a question that should keep every marketer and product manager awake at night: if 58% of consumers now rely on AI for product recommendations—more than double the rate from two years ago—and 93% of those AI search sessions end without a single click to a website… who controls the top of your funnel?

Not Google. Not anymore; at least, not exclusively.

The answer is increasingly: large language models. ChatGPT, Perplexity, Claude, Gemini. These systems don’t show you ten blue links. They answer.

But even that word—“answer”—already undersells what’s happening. These systems don’t just produce text anymore. They use tools. They browse, they synthesize, they compose. Ask Perplexity Computer to help you evaluate running shoes and it doesn’t just list options: it builds you an interactive comparison dashboard, pulls real-time pricing, and links to purchase. Ask Claude to analyze your market positioning and it generates a live application with charts you can manipulate.

The “answer” has become an experience that you interact with dynamically.

And this is the thread I want you to hold onto as you read this article: the unit of interaction on the web is shifting from pages to applications, from static content to dynamic composition, from things you read to things that act on your behalf.

Multimodality used to mean text plus images plus audio. Now it means answers that take the form of tools, dashboards, agents, and transactions. The agentic web doesn’t just serve information. It synthesizes, composes, and acts. It does so on a substrate that is uniquely suited to this kind of dynamic creation: the open web.

When I spoke with Aravind Srinivas back in 2023—before Perplexity had become a household name—he described a clear arc: we went from libraries to Google to answer engines, each step eliminating a layer of manual labor between the question and the knowledge. He called what Perplexity was building “dynamic personalized Wikipedia pages.” But even that framing assumed the answer would still be a page. What’s happened since is that the answer broke free of the page entirely. It became an application, a tool, an agent that acts.

This is the world I hinted at two years ago in Part 1 and Part 2 of this series, when I wrote about the Great Rebundling and how the web could eat software. I described a future where LLMs reorganized discovery away from ad-driven intermediaries, where WebGPU closed the performance gap between browsers and native apps, and where the web reasserted itself as the open, composable platform it was always meant to be.

It took me a while to finally write this Part 3. But the wait was worth it, because the changes that have landed in the last two years are not incremental. They’re structural. Three forces have converged: a revolution in how people discover brands and products through AI, a new payments infrastructure built for agents to transact on behalf of humans (and each other), and a dramatic expansion of who can build for the web and what they can build. Together, they amount to something I can only describe as the agentic web.

The Quick WebGPU Update

First, a loose end from Part 2. When I wrote about WebGPU in 2024, it was a Chrome-only affair—impressive in potential, limited in reach. The question was whether Firefox and Safari would follow.

They did, mostly. As of late 2025, WebGPU ships by default in Chrome, Edge, Firefox (on Windows, since version 141 in July 2025), and Safari on macOS Tahoe and visionOS. Apple announced full WebGPU support in Safari 26, including iOS 26 and iPadOS 26, which means mobile Safari is finally getting GPU-accelerated web capabilities, though as of this writing, iOS 26 is still in beta and hasn’t shipped to the general public. Apple being Apple, the mobile rollout is happening on their timeline, not ours. But the commitment is real: WebGPU supersedes WebGL on all Apple platforms, mapping directly to Metal. Babylon.js, Three.js, Unity, PlayCanvas, TensorFlow.js, and ONNX Runtime all work in Safari 26 beta. When iOS 26 ships broadly later this year, WebGPU will have effectively universal desktop and mobile browser coverage.

The significance of this is not just that browsers can render prettier graphics. It’s that one of desktop software’s last remaining advantages (high-performance GPU access for 3D rendering, AI inference, and immersive experiences) is now accessible through a URL. No download, no install, no app store. We’ll return to why this matters when we talk about web development later.

The New Top of the Funnel

But first, let me introduce the force that I think matters most for anyone reading this who builds products, runs a business, or markets anything online.

The way people discover things has fundamentally changed.

In Part 1, I described how search evolved from web directories to Google to LLM-powered answer engines, each transition driven by the same principle: whoever gives you better answers faster wins. That prediction has played out more aggressively than even I expected. Gartner now forecasts a 25% drop in traditional search engine volume by 2026, and AI search traffic converts at five times the rate of Google organic — 14.2% versus 2.8%. That’s not a rounding error. That’s a structural shift in the economics of attention.

What makes this especially interesting is that AI-powered discovery operates on an almost entirely different signal landscape than traditional search. Research from Princeton and Toronto shows only a 12–15% overlap between URLs that rank in Google’s top 100 and those cited by ChatGPT or Perplexity for the same queries. Eighty percent of the URLs cited by major LLMs don’t appear in Google’s top 100 at all. You can be the undisputed SEO champion for your category and remain completely invisible to the systems that a growing share of consumers actually trust for recommendations.

I’ll go much deeper on what to do about this later in this article. For now, understand that this is the new reality of the top of the funnel: LLMs are the gatekeepers, and they play by different rules.

Commerce Breaks Free

Before we talk about agents buying things, let’s talk about the structural shift that made it possible: the migration of digital commerce away from app stores and toward the open web.

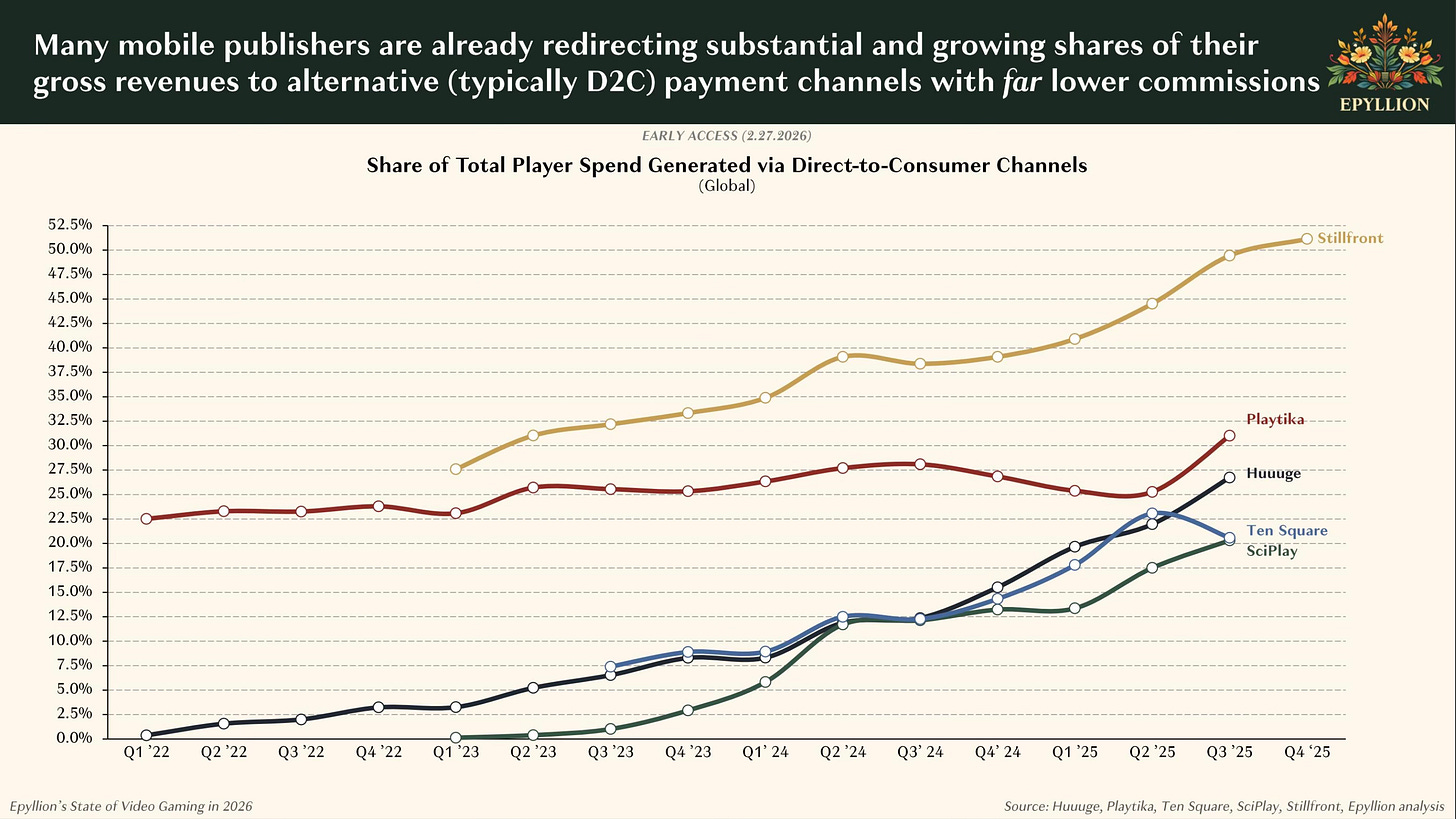

The numbers here are striking and immediate. According to Matthew Ball’s State of Video Gaming in 2026 report, some top game publishers now generate 30–50% of player spend through direct-to-consumer channels.

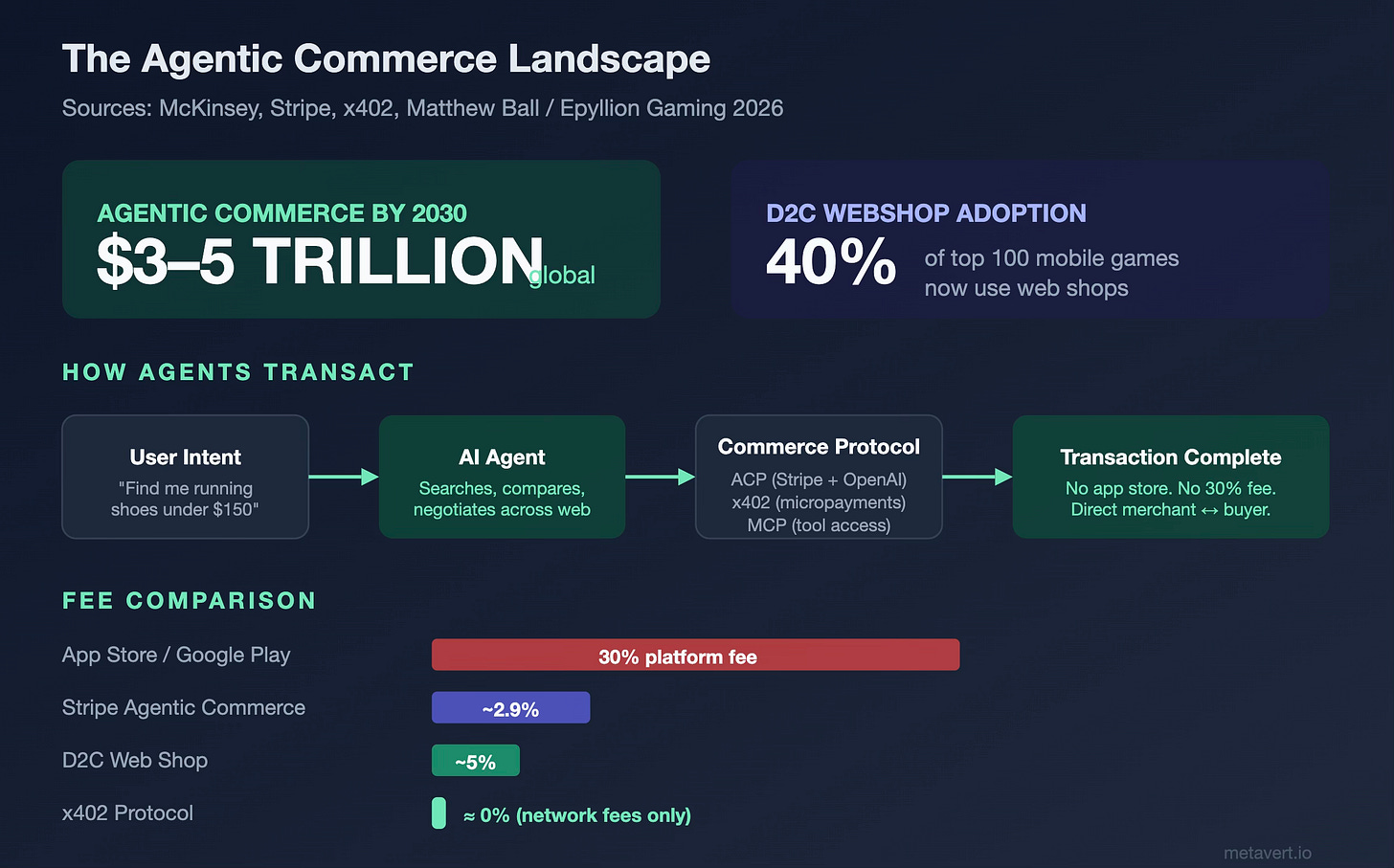

Forty percent of the top 100 mobile games use web shops. The economics are obvious: most webshop providers charge roughly 5% of revenue versus the 30% extracted by Apple and Google. Epic Games launched its webshop infrastructure in mid-2025, offering developers a 0% revenue share on their first million dollars per app, and only 12% above that. Xsolla, a longstanding payments platform for games, has built dedicated mobile webshop tooling that lets developers stand up their own storefronts.
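To make the fee gap concrete, here is a back-of-the-envelope sketch. The rates are the ones quoted above (roughly 5% for a typical webshop provider, 30% for the app stores, and a 0% share on the first $1 million followed by 12%). The $5 million revenue figure is purely illustrative, and I'm assuming the 12% rate applies only to revenue above the first million.

```python
def flat_fee(revenue: float, rate: float) -> float:
    """Platform fee under a single flat revenue share."""
    return revenue * rate


def tiered_fee(revenue: float, free_threshold: float = 1_000_000,
               rate_above: float = 0.12) -> float:
    """Fee when the first `free_threshold` dollars carry no fee.

    Assumption: `rate_above` is marginal, charged only on revenue
    beyond the threshold.
    """
    return max(0.0, revenue - free_threshold) * rate_above


revenue = 5_000_000  # hypothetical annual revenue for one app, in USD

app_store = flat_fee(revenue, 0.30)
webshop = flat_fee(revenue, 0.05)
tiered = tiered_fee(revenue)

print(f"App store (30%):       ${app_store:,.0f}")
print(f"Webshop provider (5%): ${webshop:,.0f}")
print(f"0% then 12% tier:      ${tiered:,.0f}")
print(f"Kept by moving from app store to webshop: ${app_store - webshop:,.0f}")
```

On these assumptions, the same $5 million of player spend costs $1.5 million in app store fees versus $250,000 through a 5% webshop, which is the whole argument in one subtraction.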

The legal landscape accelerated this. Court rulings in the United States (most notably the litigation between Epic and Apple) have opened the door for developers to direct users to out-of-app purchase options on iOS. The European Union’s Digital Markets Act pushed even further. These aren’t abstract policy debates; they’re the “why now” behind a wave of webshop adoption that has shifted from experimental to strategic.

This resonates with something I highlighted in Enshittification and the Future of AI Agents: the three-stage decay of platforms that attract users, habituate them, then extract from them. App stores followed this playbook perfectly. The web is the escape hatch. And now agents are driving through it, which brings us to the bigger story.

It’s worth noting that not every creator-friendly platform is purely open web. Roblox, which launches from browsers, paid creators over $1 billion in 2025, and now reaches 144 million daily active users, operates more like a walled garden built on web-native principles. But Roblox is being pulled into the agentic orbit: they recently shipped an open-source MCP server (Model Context Protocol, which I expand on below) that lets Claude modify Roblox experiences directly from natural language prompts. When even the platforms that could stay closed are adopting open agentic protocols, it tells you something about the direction of gravity.

Agentic Commerce: When Agents Start Buying

If the “answer” is becoming an application (a dynamic, composable experience rather than a block of text), then commerce is the natural next frontier. Discovery, evaluation, and purchase collapse into a single agentic flow. An agent doesn’t just recommend running shoes; it builds you a comparison tool, surfaces real-time inventory, and completes the transaction.

The question isn’t whether AI agents will start purchasing on behalf of users. It’s how fast the infrastructure can keep up.

Faster than you’d think. McKinsey estimates that AI agents could mediate $3 to $5 trillion in global consumer commerce by 2030, with as much as $1 trillion in the U.S. alone.

Tooling has already arrived: OpenClaw—the open-source personal AI agent that exploded to over 145,000 GitHub stars within two weeks of its January 2026 launch—has been called the “Napster moment” for agentic e-commerce. Users are having it plan trips end-to-end: it checks loyalty points, digs up a corporate rate it found buried in an old PDF in your email, books the flight, and completes payment with a virtual card. Others have it generating sorted grocery lists from recipe screenshots, comparing prices across local stores, and placing the order. It runs locally, it’s MIT-licensed, and the community extends its skill library daily.

Then there’s Perplexity Computer, launched in February 2026, which orchestrates 19 models in parallel and connects to hundreds of third-party services—Gmail, Slack, Notion, GitHub, Jira, FactSet—functioning as what many are calling the mass-market successor to OpenClaw’s developer-centric approach.2 These aren’t demos. These are people composing entire purchase workflows.

Discovery, evaluation, and transaction collapse into a single agentic flow: exactly the answers-as-applications pattern, playing out in commerce.

The Protocol Stack

The payments infrastructure is keeping pace. The Agentic Commerce Protocol (ACP), co-developed by Stripe and OpenAI, allows users to complete purchases within ChatGPT without ever leaving the conversation. Businesses connect their product catalogs to Stripe’s agentic commerce suite, which creates agent-readable endpoints with real-time product, pricing, and availability data. When an agent finds what you’re looking for, it generates a Shared Payment Token that is scoped to a specific seller, bounded by time and amount, and monitored by Stripe’s fraud detection. Major brands like URBN, Etsy, Coach, and Ashley Furniture are already onboarding.
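The three constraints on that token (one seller, a time window, an amount ceiling) are easy to picture as a data structure. What follows is a minimal sketch of the idea only: the class, field names, and `authorize` function are hypothetical and do not reflect Stripe's actual schema or API, and the fraud-monitoring layer is left out entirely.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass(frozen=True)
class ScopedPaymentToken:
    """Hypothetical stand-in for a scoped payment token (not Stripe's schema)."""
    seller_id: str
    max_amount_cents: int
    currency: str
    expires_at: datetime


def authorize(token: ScopedPaymentToken, seller_id: str, amount_cents: int,
              currency: str, now: datetime) -> tuple[bool, str]:
    """Return (allowed, reason) for a charge attempted with this token."""
    if seller_id != token.seller_id:
        return False, "token is scoped to a different seller"
    if now >= token.expires_at:
        return False, "token has expired"
    if currency != token.currency:
        return False, "currency mismatch"
    if amount_cents <= 0 or amount_cents > token.max_amount_cents:
        return False, "amount outside the authorized limit"
    return True, "ok"


issued = datetime(2026, 3, 1, 12, 0, tzinfo=timezone.utc)  # illustrative timestamp
token = ScopedPaymentToken("shoe-store", 15_000, "usd", issued + timedelta(minutes=30))
soon = issued + timedelta(minutes=5)

print(authorize(token, "shoe-store", 12_999, "usd", soon))
print(authorize(token, "other-shop", 12_999, "usd", soon))
print(authorize(token, "shoe-store", 20_000, "usd", soon))
print(authorize(token, "shoe-store", 12_999, "usd", issued + timedelta(hours=1)))
```

The point of the design is that a leaked or misused token is only worth one bounded purchase at one merchant, which is what makes it reasonable to hand to an agent in the first place.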

Then there’s x402, which takes a more radical path: baking payments directly into HTTP itself. When a client (human or agent) requests a paid resource, the server responds with HTTP status code 402 (the “Payment Required” status that has existed in the spec since the early web but was never implemented). The client pays using stablecoins, instantly and with essentially zero fees. No accounts, no API keys, no subscriptions. Just money moving at the speed of a web request.4
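The request, 402, pay, retry loop is simple enough to simulate in memory. This sketch is loosely modeled on that flow and is not the x402 reference implementation: the `X-PAYMENT` header name, the `accepts` field, the price, and the wallet are assumptions made for illustration, and nothing here signs or settles a real payment.

```python
import base64
import json
from typing import Callable

# Illustrative price quote; the address and amounts are made up.
PRICE = {"amount": "0.01", "asset": "USDC", "pay_to": "0xMerchantAddress"}


def server(path: str, headers: dict) -> tuple[int, dict, str]:
    """Toy paid endpoint: answers 402 until the request carries a payment header."""
    payment = headers.get("X-PAYMENT")  # header name is an assumption in this sketch
    if payment is None:
        return 402, {"Content-Type": "application/json"}, json.dumps({"accepts": [PRICE]})
    proof = json.loads(base64.b64decode(payment))
    # A real server would verify and settle the payment; this only compares fields.
    if proof.get("amount") == PRICE["amount"] and proof.get("pay_to") == PRICE["pay_to"]:
        return 200, {"Content-Type": "text/plain"}, f"premium content for {path}"
    body = json.dumps({"accepts": [PRICE], "error": "invalid payment"})
    return 402, {"Content-Type": "application/json"}, body


def fetch_with_payment(path: str, sign_payment: Callable[[dict], dict],
                       max_amount: float) -> tuple[int, str]:
    """Request a resource; on 402, pay once (if within budget) and retry."""
    status, _, body = server(path, {})
    if status != 402:
        return status, body
    offer = json.loads(body)["accepts"][0]
    if float(offer["amount"]) > max_amount:
        return status, "price exceeds the agent's budget"
    proof = sign_payment(offer)
    header = base64.b64encode(json.dumps(proof).encode()).decode()
    status, _, body = server(path, {"X-PAYMENT": header})
    return status, body


def demo_wallet(offer: dict) -> dict:
    """Hypothetical wallet; a real one would return a signed stablecoin transfer."""
    return {"amount": offer["amount"], "asset": offer["asset"],
            "pay_to": offer["pay_to"], "signature": "demo"}


print(fetch_with_payment("/reports/q1", demo_wallet, max_amount=0.05))
print(fetch_with_payment("/reports/q1", demo_wallet, max_amount=0.001))
```

Notice that the client never created an account or held an API key. The only state it needed was a wallet and a spending limit, which is why this pattern suits agents making many small purchases across services they have never seen before.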

Parallel to both sits a layer that’s easy to overlook but critical to the whole stack: Anthropic’s Model Context Protocol (MCP). If ACP and x402 define how agents pay, MCP defines how agents connect to merchant systems, payment processors, inventory databases, and enterprise backends. Anthropic open-sourced it in late 2024. It now has over 10,000 active servers and 97 million monthly SDK downloads, and in December 2025 Anthropic donated it to the Linux Foundation’s Agentic AI Foundation, co-founded with Block and OpenAI. To see what this looks like in practice: Worldpay built their agentic payments integration directly on MCP. Walmart built a “Super Agent” architecture on MCP where a customer can say “plan a unicorn-themed birthday party for 8 kids” and the agent pulls from party supplies, bakery, and invitations simultaneously: a single composed experience spanning multiple inventory systems. Visa and Mastercard have launched their own agentic AI payment tools. The infrastructure is real.
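Under the hood, MCP is JSON-RPC 2.0: a client asks a server which tools it offers (`tools/list`) and then invokes one (`tools/call`). Here is a stripped-down sketch of that exchange using only the standard library rather than an MCP SDK. The inventory data and the `search_inventory` tool are hypothetical, and a real server would also handle initialization, transports, and proper per-tool error reporting.

```python
import json

# Hypothetical in-memory inventories standing in for separate merchant systems.
INVENTORY = {
    "party_supplies": ["unicorn balloons", "rainbow plates"],
    "bakery": ["unicorn cake (serves 8)"],
}

TOOLS = [{
    "name": "search_inventory",  # hypothetical tool name
    "description": "Find items in one department matching a theme.",
    "inputSchema": {
        "type": "object",
        "properties": {"department": {"type": "string"}, "theme": {"type": "string"}},
        "required": ["department", "theme"],
    },
}]


def handle(request_json: str) -> str:
    """Answer one JSON-RPC 2.0 request for the two tool methods sketched here."""
    req = json.loads(request_json)
    method, params = req["method"], req.get("params", {})
    if method == "tools/list":
        result = {"tools": TOOLS}
    elif method == "tools/call" and params.get("name") == "search_inventory":
        args = params["arguments"]
        items = [i for i in INVENTORY.get(args["department"], []) if args["theme"] in i]
        result = {"content": [{"type": "text", "text": json.dumps(items)}], "isError": False}
    else:
        # Simplification: unknown methods and unknown tools share one generic error.
        error = {"code": -32601, "message": "Method not found"}
        return json.dumps({"jsonrpc": "2.0", "id": req.get("id"), "error": error})
    return json.dumps({"jsonrpc": "2.0", "id": req.get("id"), "result": result})


def call_tool(request_id: int, department: str, theme: str) -> list:
    """Build a tools/call request, send it to the toy server, and decode the items."""
    request = {"jsonrpc": "2.0", "id": request_id, "method": "tools/call",
               "params": {"name": "search_inventory",
                          "arguments": {"department": department, "theme": theme}}}
    response = json.loads(handle(json.dumps(request)))
    return json.loads(response["result"]["content"][0]["text"])


# An agent composing one answer from two separate inventory systems.
for n, dept in enumerate(["party_supplies", "bakery"], start=1):
    print(dept, call_tool(n, dept, "unicorn"))
```

Because every tool describes its own inputs with a schema, an agent can discover and call a merchant system it was never explicitly programmed against, which is what makes the multi-department party example plausible.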

It’s worth noting that MCP isn’t the only connectivity protocol in play. Google’s A2A (Agent-to-Agent) protocol handles inter-agent collaboration, and there’s a growing ecosystem of complementary standards. But MCP has won the adoption race so far (10,000+ servers versus roughly 50 A2A partners), largely because it solved the most immediate problem first: giving agents a standardized way to access tools and data, the equivalent of a USB-C port for AI applications. As even Sam Altman put it when OpenAI announced MCP support: “People love MCP.” The protocols are complementary, not competitive, with MCP handling tool access and A2A handling cross-organizational collaboration, but MCP’s head start matters.5

As I wrote in The Age of Machine Societies, autonomous agents are already demonstrating genuine cooperative and competitive behaviors — collaborating to solve complex tasks, forming alliances, even launching their own token economies. Tools like OpenClaw and Perplexity Computer are making agent-mediated action accessible to ordinary users; ACP and x402 are making agent-mediated transactions possible at scale; and MCP provides the connective tissue that lets agents actually navigate merchant infrastructure. Together, they’re building the commerce layer that machine societies will transact on.1

The Web Is Getting Easier to Build

There’s a reason the web is winning this battle, and it’s not just that the fees are lower. It’s that the web is becoming dramatically easier to build for. The tools doing the building are overwhelmingly targeting the web as their default output surface.

Remember that shift from answers-as-text to answers-as-applications? This is where it becomes concrete. When an LLM’s response to your question is a fully functional web app, the web isn’t just a distribution channel: it’s the medium of thought for AI systems.

Consider the numbers: 41% of all code written globally is now AI-generated. Ninety-two percent of U.S. developers use AI coding tools daily. Twenty-one percent of Y Combinator’s Winter 2025 startups shipped codebases that are 91% or more AI-generated. The vibe coding platform market hit $4.7 billion and is projected to reach $12.3 billion by 2027.

And what are all these tools building? Web apps. HTML, React, and Node are the default output targets for virtually every agentic coding platform: from Cursor and Bolt to Claude Code and Perplexity Computer. Perplexity Computer is especially interesting here: it orchestrates 19 models in parallel, has connectors to hundreds of web-native data providers, and outputs fully functional web applications. When I used it to create an agentic marketer for LastSaaS—and separately, a real-time investments dashboard with thesis analysis and scenario simulation—I described what I wanted in plain language and got back fully functional web applications: interactive charts, real-time data connectors, responsive layouts. No build step, no compilation, no App Store review. Just URLs. These weren’t “answers” in any traditional sense; they were bespoke software applications, composed on the fly, deployed to the web instantly. That’s the new multimodality: not text plus images, but answers that take the form of interactive tools.

This is a point I’ve made before — first in Semantic Programming and Software 2.0, and more recently in Software’s Creator Era Has Arrived, where I argued that software is undergoing the same Pioneer→Engineering→Creator transition that transformed media, music, and games. Language models are becoming a compiler for natural language. What’s happened since is that this compiler got much better, and the output format it prefers is overwhelmingly web-native. The web is the universal deployment target because it requires no gatekeepers, no installation, and no platform-specific adaptation. An agent can generate a complete application and make it available to anyone with a browser in a single step.

And here’s where it comes together: the same agentic layer that makes software easier to build is the one that diminishes the gravitational pull of incumbent platforms. Yes, Gmail and Google Calendar probably only get stronger in a world where AI agents orchestrate everything: they’re deeply embedded data providers with robust APIs.

What erodes is the bundling advantage: the lock-in that kept users inside a single vendor’s ecosystem because switching costs were too high and interoperability too painful. When agents can seamlessly weave together data from any web-native source—pulling your calendar from Google, your tasks from Notion, your email from wherever, your analytics from a dashboard you built last Tuesday—the value shifts from the platforms that hoard your data to the agents that orchestrate it. As I detailed in Composability Is the Most Powerful Creative Force in the Universe, the most powerful systems emerge from interoperable primitives. The open web is the ultimate composable substrate. That’s a web renaissance by any definition.

With WebGPU now shipping across all major browsers, even the last stronghold of desktop-only software—high-performance 3D graphics and GPU-accelerated computation—is accessible through the browser. As game engines and creative tools adopt WebGPU, the case for requiring a download shrinks further. The web won’t replace AAA game engines overnight, but the trajectory is clear: more and more of what required a native application can be delivered through a URL.

The Deep Dive: Winning in an LLM-First World

Now let me return to what I promised: a deeper look at what the shift to LLM-mediated discovery actually means for how you build, market, and grow a business on the agentic web. And keep in mind the thread we’ve been following: when answers are applications—when discovery, evaluation, and action collapse into a single agentic flow—the question of whether an LLM recommends you becomes existential. It’s not just about visibility in a text response. It’s about whether your brand gets woven into the dynamic, composed experiences that agents build for users.

The discipline emerging around this is called Generative Engine Optimization (GEO) and it represents a genuinely new category of marketing practice. Not SEO (search engine optimization) with a new acronym. A fundamentally different optimization problem.

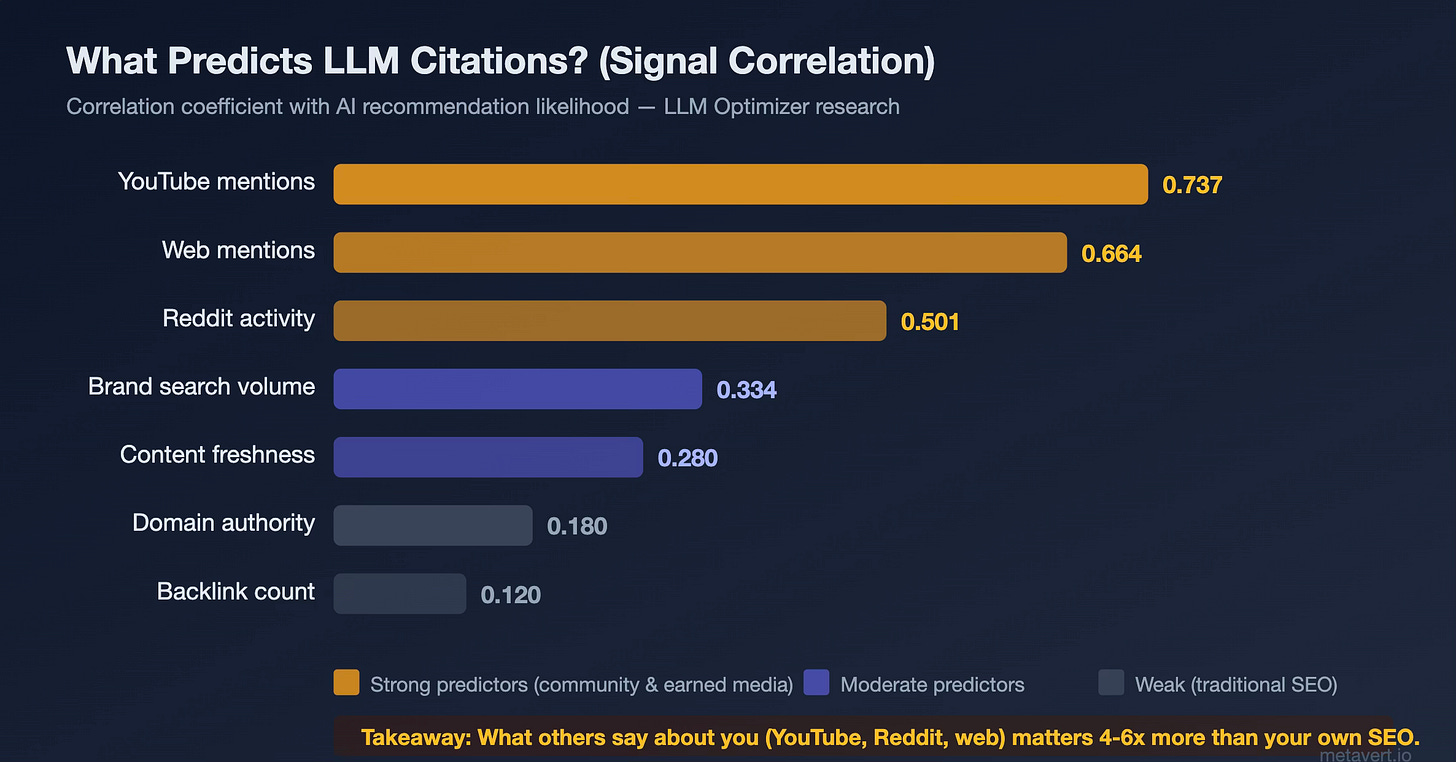

Here’s why. Traditional SEO optimizes for Google’s ranking algorithm: backlinks, domain authority, keyword density, page speed. GEO optimizes for something different entirely: the likelihood that a language model will cite, recommend, or surface your brand when answering a relevant question. And the signals that drive LLM citations are only 12–18% correlated with traditional SEO signals. Domain authority and backlinks, the bedrock of SEO for two decades, barely register.

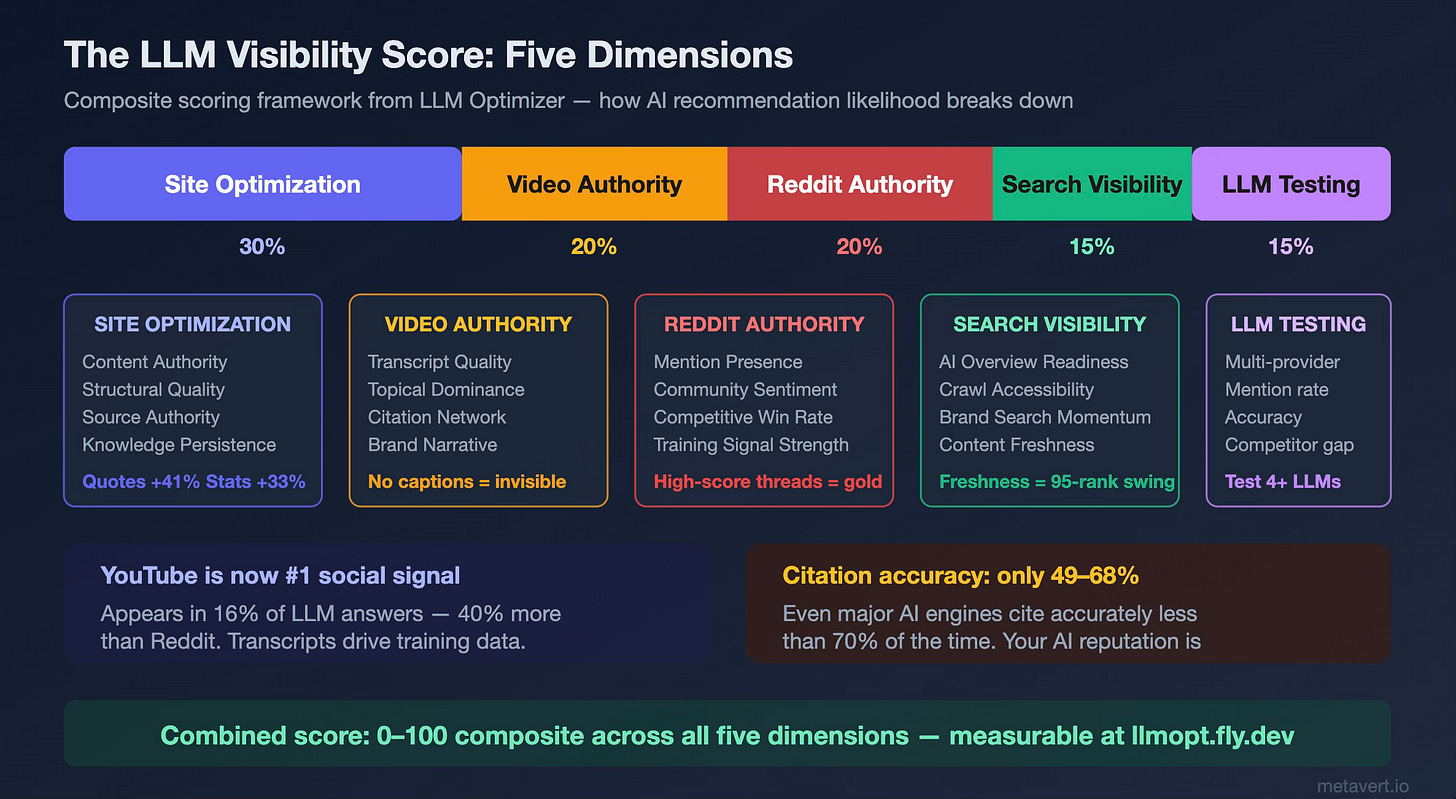

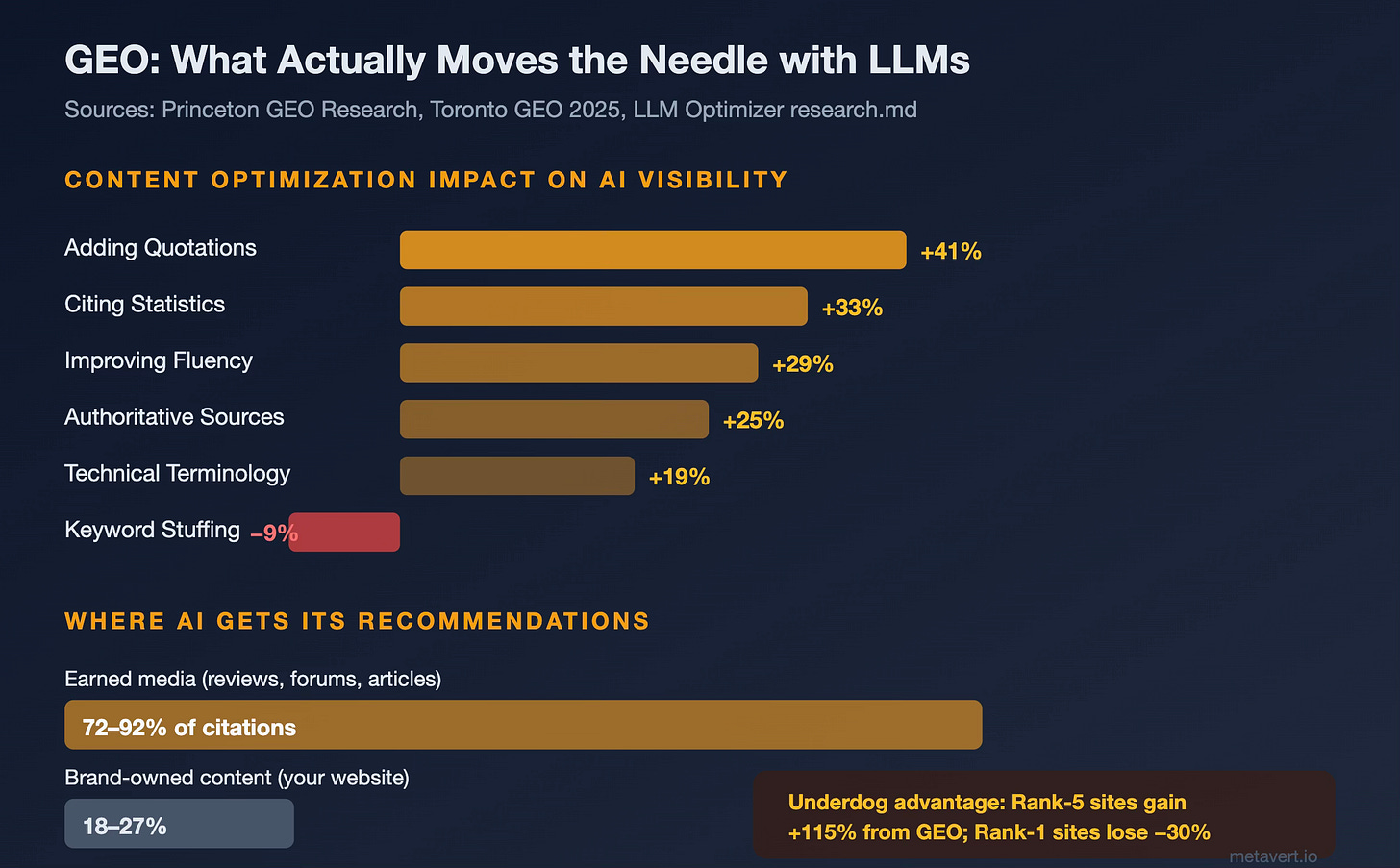

What does matter? Research from Princeton’s GEO study, the Toronto GEO 2025 paper, and my own work building LLM Optimizer (an open-source project I’m testing to improve brand visibility across AI discovery channels) tells a clear story:

Quotability is king. Adding quotable statements from authoritative sources produces the single largest visibility improvement: +41% in LLM citations. Statistics provide +33%. Improving overall fluency and readability: +29%. Authoritative source references: +25%. Meanwhile, traditional keyword stuffing — the junk-food of SEO — actually hurts your AI visibility by 9%. LLMs aren’t matching keywords; they’re evaluating whether your content is worth citing.

Earned media dominates. LLMs draw 72–92% of their citations from earned media: reviews, forum discussions, independent articles, YouTube videos. Only 18–27% comes from brand-owned content like your corporate website. This inverts the traditional content marketing playbook, where you control the narrative on your own domain. In the LLM world, your reputation is shaped primarily by what others say about you — especially on platforms like YouTube (which appears in 16% of LLM answers, 40% more frequently than Reddit) and community forums.

The democratization effect is real. Sites that rank fifth on Google see a +115% improvement from GEO optimization. Sites that rank first actually see a −30% effect — because the competitive landscape is completely reshuffled. For challenger brands and solopreneurs, this is extraordinarily good news: the playing field has reset, and the advantage goes to those who understand the new rules first.

Freshness matters more than authority. AI citations skew 25.7% newer than Google citations on average. Content freshness changes can shift AI recommendation positions by as many as 95 ranks. If you’re not publishing consistently, you’re fading from the AI’s memory.

I’ve been hacking together LLM Optimizer to make these dynamics measurable and actionable. It’s an app I’m currently testing with early users that analyzes your brand across five dimensions: site content optimization, video authority (YouTube), Reddit presence, search visibility, and direct LLM testing across Claude, ChatGPT, Gemini, and Grok—and produces a composite visibility score with specific, evidence-based recommendations.3

The five-dimensional framework behind LLM Optimizer’s composite visibility score.

It’s in active development and I’d encourage anyone navigating this shift to take a look. I’ll have a more detailed writeup on the tool and its methodology soon — the research and scoring framework I’ve built into it informed much of this article’s analysis.

The Agentic Web

Step back and look at what’s happening in aggregate.

An answer used to be a page of text. Then it became a page of links. Then a snippet at the top of a search result. Now it’s becoming something entirely different: a dynamically composed experience that is part information, part application, part agent. It doesn’t just tell you something; it does something. It builds you a dashboard. It compares prices across vendors and completes the purchase. It generates a tool you can interact with, share, and build upon.

And where does this happen? On the web. Not in an app store. Not inside a walled garden. On the open, composable, URL-addressable web: the only medium flexible enough to serve as both the canvas and the runtime for this kind of dynamic creation.

The protocols agents transact on (ACP, x402, MCP) are web-native. The software they build targets the browser by default. The content they cite follows a different logic than the platform algorithms we’ve spent two decades optimizing for. The GPU capabilities that once kept high-end experiences locked inside native apps are now available through a URL. The web isn’t just surviving the agentic era. It’s the substrate of the agentic era.

What I described in Part 1 as the Great Rebundling is now playing out across every dimension of online activity. The intermediaries haven’t disappeared; they’ve changed form.

Instead of Google organizing the world’s information behind a wall of ads, or Apple extracting rent on every digital transaction through a locked-down app store, we’re seeing a new architecture emerge: one where AI agents navigate the open web on your behalf, compose services from whatever sources serve you best, and transact using protocols that don’t extract 30% for the privilege of existing.

For entrepreneurs, solopreneurs, and product builders, the implications are practical and immediate. Your marketing funnel now starts with whether an LLM recommends you, and increasingly, whether it weaves your product into the applications it composes for users. Optimize for that. Your distribution strategy should favor the web over app stores wherever possible: the economics are better and the barriers to entry are lower.

Your development velocity should take advantage of agentic tools that can spin up web applications from natural language descriptions. And your content strategy should prioritize earning citations from the sources that LLMs actually trust: community discussions, independent reviews, expert commentary, and YouTube.

The web was always supposed to be open, composable, and accessible. For a long time, the dominant platforms pulled it in the opposite direction: toward walled gardens, toward rent-seeking intermediaries, toward the enshittification cycle.

The agentic web is a correction. Not a utopia; there are real concerns about AI accuracy, about the concentration of power in foundation model providers, about security in multi-agent systems. But the direction is unmistakable: agents prefer the open web because it’s the medium that lets them compose freely. And as they compose, they’re transforming not just the web but the very nature of what it means to search, to discover, to build, and to transact online.

The answer is no longer a page. It’s an experience. And the web is where experiences get made.

The renaissance continues.

Further Reading

This series:

Web Renaissance, Part 1: The Great Rebundling

Web Renaissance, Part 2: How the Web Eats Software

Related articles by me:

The State of AI Agents in 2026: 200+ slide research deck on agentic engineering

Software’s Creator Era Has Arrived: how AI agents are democratizing software development

Semantic Programming and Software 2.0: language models as compilers for natural language

Composability Is the Most Powerful Creative Force in the Universe: why interoperable primitives beat monolithic solutions

The Age of Machine Societies: autonomous agents collaborating, competing, and transacting

Enshittification and the Future of AI Agents: the platform decay cycle and the agentic escape hatch

Tools and technology:

LLM Optimizer: Analyze and improve your brand’s AI discovery visibility

Stripe Agentic Commerce Suite — Infrastructure for agent-mediated transactions

x402 Protocol — Internet-native micropayments via HTTP 402

Agentic Commerce Protocol (ACP) — Open spec for agent-to-merchant commerce

Model Context Protocol (MCP) — The connective tissue for agentic systems

OpenClaw: Open-source personal AI agent

Perplexity Computer: Multi-model agentic platform

Jon Radoff interviews Aravind Srinivas (2023) — The conversation that prefigured much of this article

Key research sources cited:

GEO: Generative Engine Optimization (Princeton, 2024), a foundational paper on optimizing content for LLM citation

NanoKnow (2026): how training data frequency predicts LLM answer accuracy

1 The complementarity between ACP and x402 mirrors a pattern I explored in Composability Is the Most Powerful Creative Force in the Universe: the most powerful systems are built from interoperable primitives rather than monolithic solutions. Payments on the agentic web will likely use both protocols depending on the transaction type, just as the web itself uses HTTP, WebSockets, and WebRTC for different communication patterns.

2 The contrast between OpenClaw and Perplexity Computer is instructive. OpenClaw is developer-first: open source, local-first, extensible through community skills, running on your machine with full system access. Perplexity Computer is consumer-first: hosted, managed, 19-model orchestration, hundreds of pre-built connectors, $200/month. This mirrors the classic open-vs-managed split in every infrastructure category (and both are winning, because the real competitor is doing nothing). Galaxy Research estimates that agent-mediated transactions could represent a meaningful fraction of all internet commerce within five years, and that’s a large enough market for many approaches to coexist.

3 One finding from building LLM Optimizer that surprised me: YouTube transcripts are disproportionately powerful for LLM training. Research shows that 7-billion-parameter video models trained on high-quality YouTube transcripts outperform 72-billion-parameter models with lower-quality training data. The implication is stark — if your brand produces video content without accurate captions, you are invisible to the LLMs that are training on that content. No transcripts = no training signal = no recommendations. It’s a binary gate that most brands haven’t yet grasped.

4 The HTTP 402 “Payment Required” status code has existed in the HTTP specification since 1997, reserved for future use but never implemented. It took nearly three decades and the arrival of autonomous AI agents to finally give it a purpose. There’s something poetic about the web’s original architects leaving a placeholder for machine-native payments, and a generation of AI engineers finally filling it in. For more on x402’s technical architecture, see the x402 protocol documentation and Galaxy’s analysis of its implications for AI agent economies.

5 The protocol landscape for agentic systems is worth watching closely. MCP (tool access), A2A (agent-to-agent collaboration), ACP (commerce transactions), and Google’s UCP (Universal Commerce Protocol) each address different layers of the stack. What’s interesting is that they’re converging on complementary roles rather than competing head-to-head, much like how HTTP, TCP, and DNS each handle different layers of the web itself. MCP’s early dominance mirrors how USB-C won: solve the most common connection problem first, then let the ecosystem build on top. The fact that Anthropic donated MCP to the Linux Foundation (with OpenAI, Google, Microsoft, and AWS as supporters) signals that this is infrastructure, not product strategy.