ShaderVine: A WebGPU Shader Editor Built for the Agentic Era

GPU shaders, compute simulations, and why creative tools need MCP

When I built Threelab a few weeks ago, I wrote about the modality gap: the frustrating bottleneck of directing visual work through text. I described how adjusting the way particles trail through a flow field felt like directing a painter by writing letters instead of pointing at the canvas.

That observation nagged at me. So I built something to push further into it.

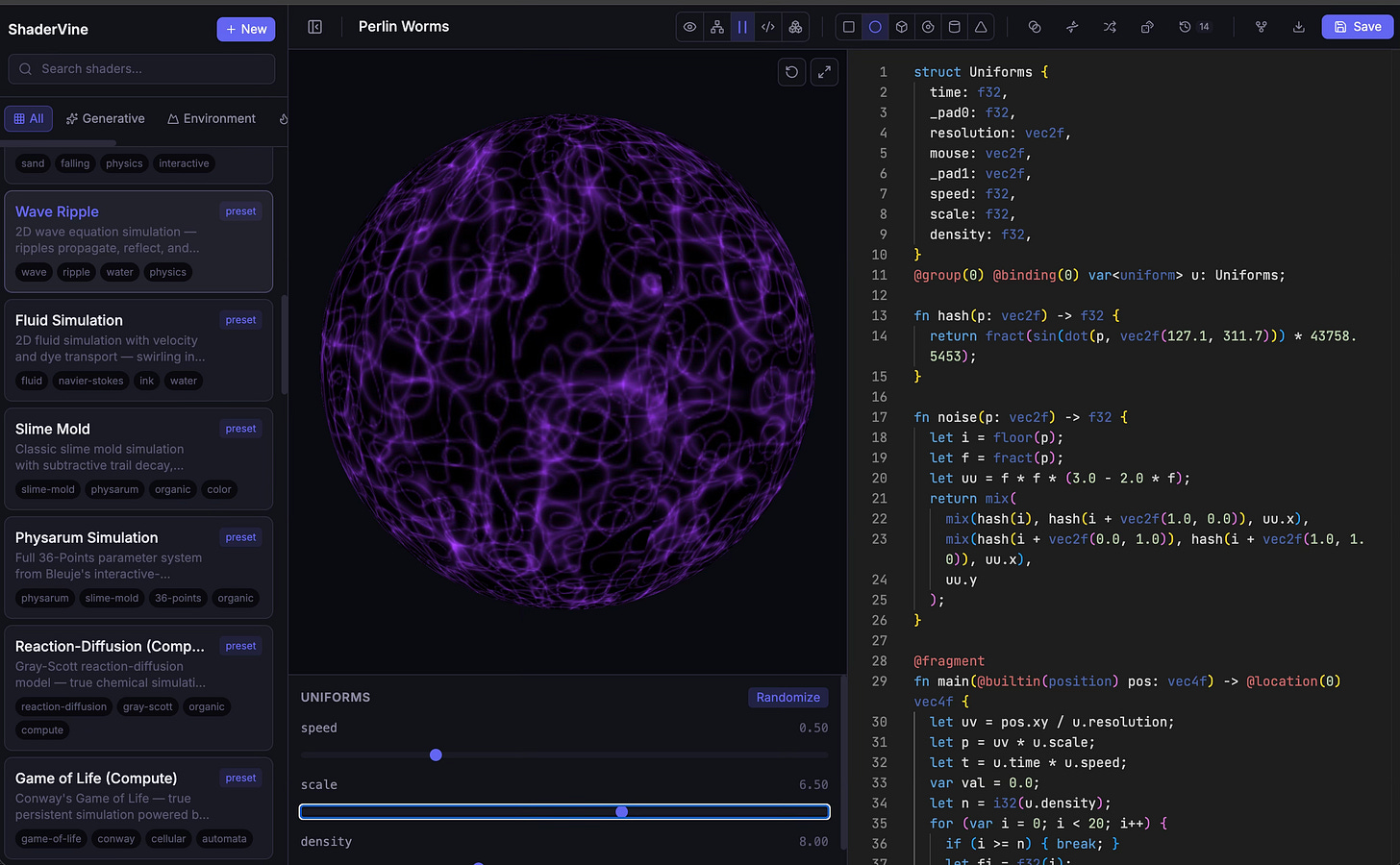

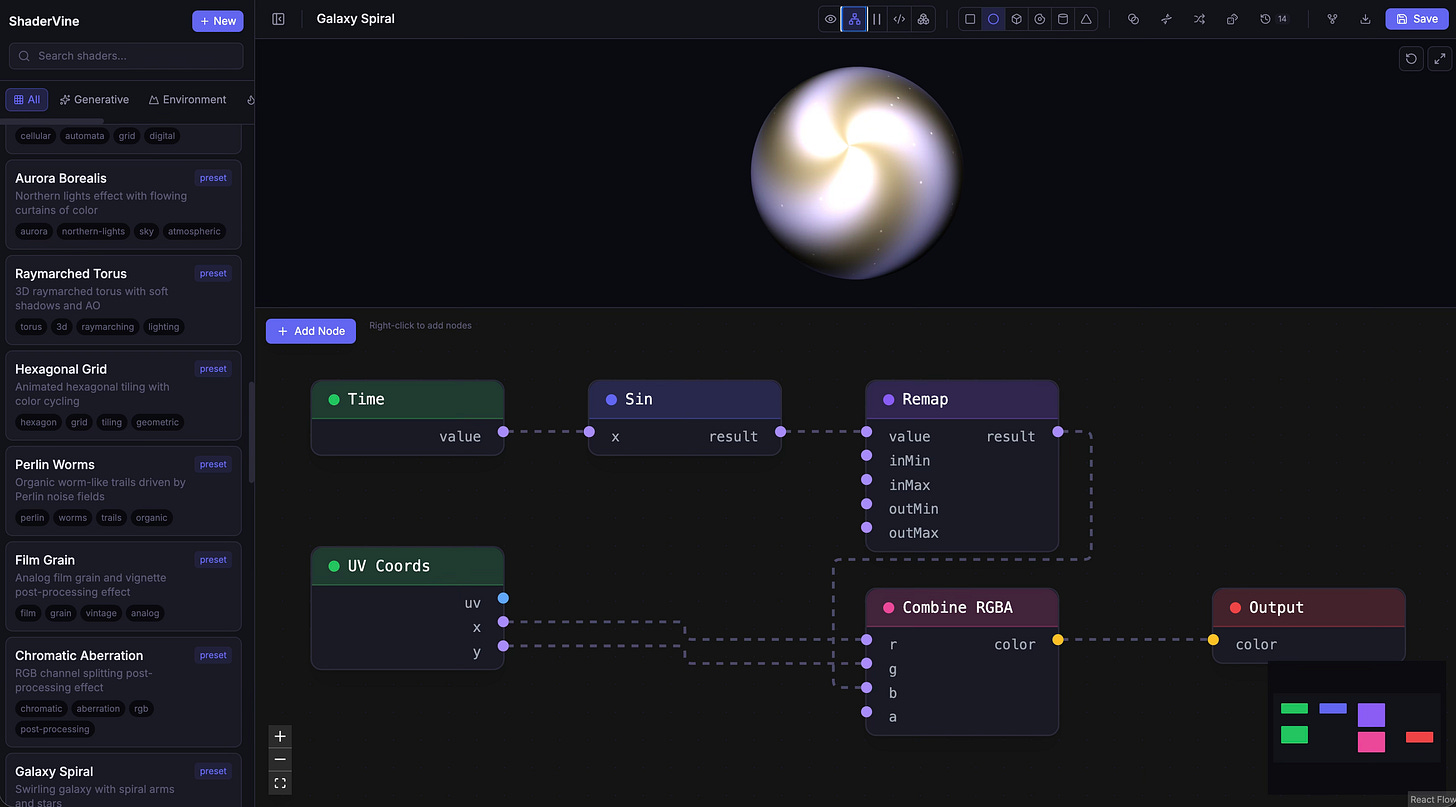

ShaderVine is a browser-based WebGPU shader programming toolkit. It’s a Monaco-powered WGSL editor with live preview, 16 GPU compute simulations, a genetic evolution system for shaders, visual morph transitions, a node-graph editor, and export targets for Unity, Unreal, Blender, Three.js, and raw HLSL. It runs entirely in the browser, requires no installation, and is open source under the MIT license.

But the part I’m most interested in talking about isn’t the feature list. It’s the design philosophy: I built ShaderVine specifically for the agentic era—architected to be forked and maintained with Claude Code, and with a full MCP server that makes every creative operation controllable by AI agents.

WebGPU Is Delivering

When I wrote about WebGPU in my Web Renaissance piece in early 2024, it was Chrome-only and speculative. In my Agentic Web piece earlier this year, I reported that Firefox and Safari had shipped support, with iOS still in beta.

ShaderVine gave me the chance to stress-test WebGPU not just for rendering but for compute—running GPU simulations with ping-pong buffer architectures, particle systems writing to storage buffers, reaction-diffusion calculations at thousands of cells per frame. The verdict: it works. Not “works with caveats.” It works the way you’d want a modern GPU API to work. I’m running fluid simulations, physarum slime mold, erosion modeling, particle swarms, and Turing pattern generation—all in WGSL compute shaders, all in the browser, all at interactive framerates.

This is the promise I was writing about two years ago, now real. One of desktop software’s last remaining advantages—high-performance GPU compute—is accessible through a URL.

Why a Shader Editor

Shaders sit at a peculiar intersection of art and engineering. They’re programs that run on the GPU, but the output is purely visual—color, light, motion, pattern. A shader programmer thinks simultaneously in math and aesthetics: this sine function produces that ripple, this noise octave creates that texture, this distance field carves that shape.

This makes shader development a fascinating test case for agentic tools. The code is compact (a fragment shader might be 50 lines), the output is immediately visible, and the parameter space is enormous. Changing a single float from 0.3 to 0.7 might transform a gentle wave into an aggressive distortion.

ShaderVine ships 16 built-in compute simulations: Conway’s Game of Life, reaction-diffusion, physarum transport networks, fluid dynamics, falling sand, erosion, particle swarms with trails, magnetic field visualization, diffusion-limited aggregation, domain warping, turbulence, and more. Each runs as a WebGPU compute shader with a ping-pong buffer architecture—two storage textures alternating as input and output each frame, the GPU doing all the heavy lifting.
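To make the ping-pong pattern concrete, here is a minimal CPU-side sketch in TypeScript. It is not ShaderVine’s code: the function names (`stepLife`, `runPingPong`) are hypothetical, plain typed arrays stand in for the two storage textures, and the wrap-around edges are an assumption of this sketch. The point is only the shape of the loop: each frame reads from one buffer, writes to the other, then swaps them.

```typescript
// CPU stand-in for the GPU pattern: two buffers alternate as input and output.
type Grid = Uint8Array;

// One Game of Life step. Reads only from `src`, writes only to `dst`.
function stepLife(src: Grid, dst: Grid, width: number, height: number): void {
  for (let y = 0; y < height; y++) {
    for (let x = 0; x < width; x++) {
      let neighbors = 0;
      for (let dy = -1; dy <= 1; dy++) {
        for (let dx = -1; dx <= 1; dx++) {
          if (dx === 0 && dy === 0) continue;
          // Wrap around the edges (toroidal grid, an assumption of this sketch).
          const nx = (x + dx + width) % width;
          const ny = (y + dy + height) % height;
          neighbors += src[ny * width + nx] ?? 0;
        }
      }
      const alive = (src[y * width + x] ?? 0) === 1;
      dst[y * width + x] = neighbors === 3 || (alive && neighbors === 2) ? 1 : 0;
    }
  }
}

// Runs `frames` steps, swapping the roles of the two buffers after each one.
function runPingPong(initial: Grid, width: number, height: number, frames: number): Grid {
  let front: Grid = Uint8Array.from(initial); // read during this frame
  let back: Grid = new Uint8Array(initial.length); // written during this frame
  for (let i = 0; i < frames; i++) {
    stepLife(front, back, width, height);
    [front, back] = [back, front]; // last frame's output becomes the next input
  }
  return front;
}
```

Because a step never reads from the buffer it is writing, every cell can be computed independently, which is what lets a compute shader process the whole grid in parallel.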

Designed for Agents

Here’s where ShaderVine differs from the shader editors that already exist. Shadertoy, The Book of Shaders, ISF Editor: those tools were built for humans using browsers. They’re great tools, and quite a bit more advanced than ShaderVine is at launch, but ShaderVine is built to evolve rapidly.

ShaderVine was built for a world where AI agents are collaborators in the creative process. Concretely, this means three things.

MCP as a first-class citizen. ShaderVine includes a full MCP server (built on mcp-go) that exposes shader operations as callable tools. An agent can search the gallery, create a new shader, fork an existing one, modify code, adjust uniforms, trigger evolution, export to any target format—all programmatically. The MCP server isn’t an afterthought bolted onto a human-facing tool. It’s a parallel interface designed so that an agent working through Claude Code or any MCP-compatible client can do everything a human can do in the browser UI. This is the pattern I described in my Agentic Web piece: software designed from the ground up to be operated by AI, not just used by humans through a GUI.
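As a rough illustration of what “callable tools” means on the wire, here is a TypeScript sketch of the JSON-RPC 2.0 messages an MCP client sends to invoke a tool. The `tools/call` method and the `name`/`arguments` parameters come from the MCP specification; the tool names (`fork_shader`, `set_uniform`), their argument fields, and the `createToolCaller` helper are hypothetical and are not taken from ShaderVine’s actual tool list.

```typescript
// Shape of an MCP tool invocation: a JSON-RPC 2.0 request with method "tools/call".
interface ToolCallRequest {
  jsonrpc: "2.0";
  id: number;
  method: "tools/call";
  params: { name: string; arguments: Record<string, unknown> };
}

// Returns a builder that assigns a fresh request id to each call.
function createToolCaller(): (name: string, args: Record<string, unknown>) => ToolCallRequest {
  let nextId = 1;
  return (name, args) => ({
    jsonrpc: "2.0",
    id: nextId++,
    method: "tools/call",
    params: { name, arguments: args },
  });
}

// Hypothetical two-step agent workflow: fork a shader, then adjust one uniform.
const callTool = createToolCaller();
const requests: ToolCallRequest[] = [
  callTool("fork_shader", { shaderId: "example-id" }),
  callTool("set_uniform", { shaderId: "example-fork-id", name: "speed", value: 0.7 }),
];

// What would actually be sent to the server, one message per request.
const wireMessages: string[] = requests.map((r) => JSON.stringify(r));
```

The useful property is that an agent composes small, named operations into a workflow, which is the same thing a human does by clicking through the UI.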

Fork-friendly architecture. The codebase—React 19, TypeScript, Vite, Go backend with MongoDB—is designed to be maintainable by Claude Code. Clean module boundaries, typed interfaces throughout, Docker containerization that a coding agent can build and deploy without human intervention, and documentation written as much for an agent reader as a human one.

Direct manipulation alongside generation. This is the lesson from Threelab I carried forward most deliberately. Text-based prompting is a lossy channel for visual work. ShaderVine addresses this with interactive uniform sliders, mouse input passed as built-in uniforms, and two creative evolution tools that don’t require text at all: shader morphing (crossfade between two shaders to explore the interpolation space) and genetic evolution (generate mutated variants, select the ones you like, breed them). An agent handles the generative heavy lifting; a human handles the aesthetic judgment; the tool provides interfaces native to each modality.
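The morph and evolve loops can be sketched in a few lines of TypeScript. This is a simplified model under a loud assumption: it treats a shader as nothing more than a list of uniform values normalized to [0, 1], whereas ShaderVine’s real system may mutate shader code or blend rendered output. All names here (`Genome`, `morph`, `breed`, `mutate`, `nextGeneration`) and the mutation rate and strength are hypothetical.

```typescript
// Assumption of this sketch: a "genome" is a list of uniform values in [0, 1].
type Genome = number[];
type Rng = () => number; // returns a value in [0, 1), like Math.random

const clamp01 = (v: number): number => Math.min(1, Math.max(0, v));

// Morphing: linear interpolation. t = 0 gives `a`, t = 1 gives `b`.
function morph(a: Genome, b: Genome, t: number): Genome {
  return a.map((v, i) => v + ((b[i] ?? v) - v) * t);
}

// Breeding: uniform crossover, each gene copied from one parent at random.
function breed(a: Genome, b: Genome, rng: Rng = Math.random): Genome {
  return a.map((v, i) => (rng() < 0.5 ? v : (b[i] ?? v)));
}

// Mutation: each gene is nudged with probability `rate` by at most `strength`.
function mutate(g: Genome, rate: number, strength: number, rng: Rng = Math.random): Genome {
  return g.map((v) => (rng() < rate ? clamp01(v + (rng() * 2 - 1) * strength) : v));
}

// Picks a random element; throws on an empty list.
function pick<T>(items: T[], rng: Rng): T {
  const item = items[Math.floor(rng() * items.length)];
  if (item === undefined) throw new Error("cannot pick from an empty list");
  return item;
}

// One generation: the human supplies `selected`, the tool fills a new grid of variants.
function nextGeneration(selected: Genome[], size: number, rng: Rng = Math.random): Genome[] {
  return Array.from({ length: size }, () =>
    mutate(breed(pick(selected, rng), pick(selected, rng), rng), 0.3, 0.2, rng),
  );
}
```

Nothing in this loop needs a text prompt: the only human input is which variants go into `selected`, and the only input to `morph` is a slider position `t`.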

Export: From Browser to Engine

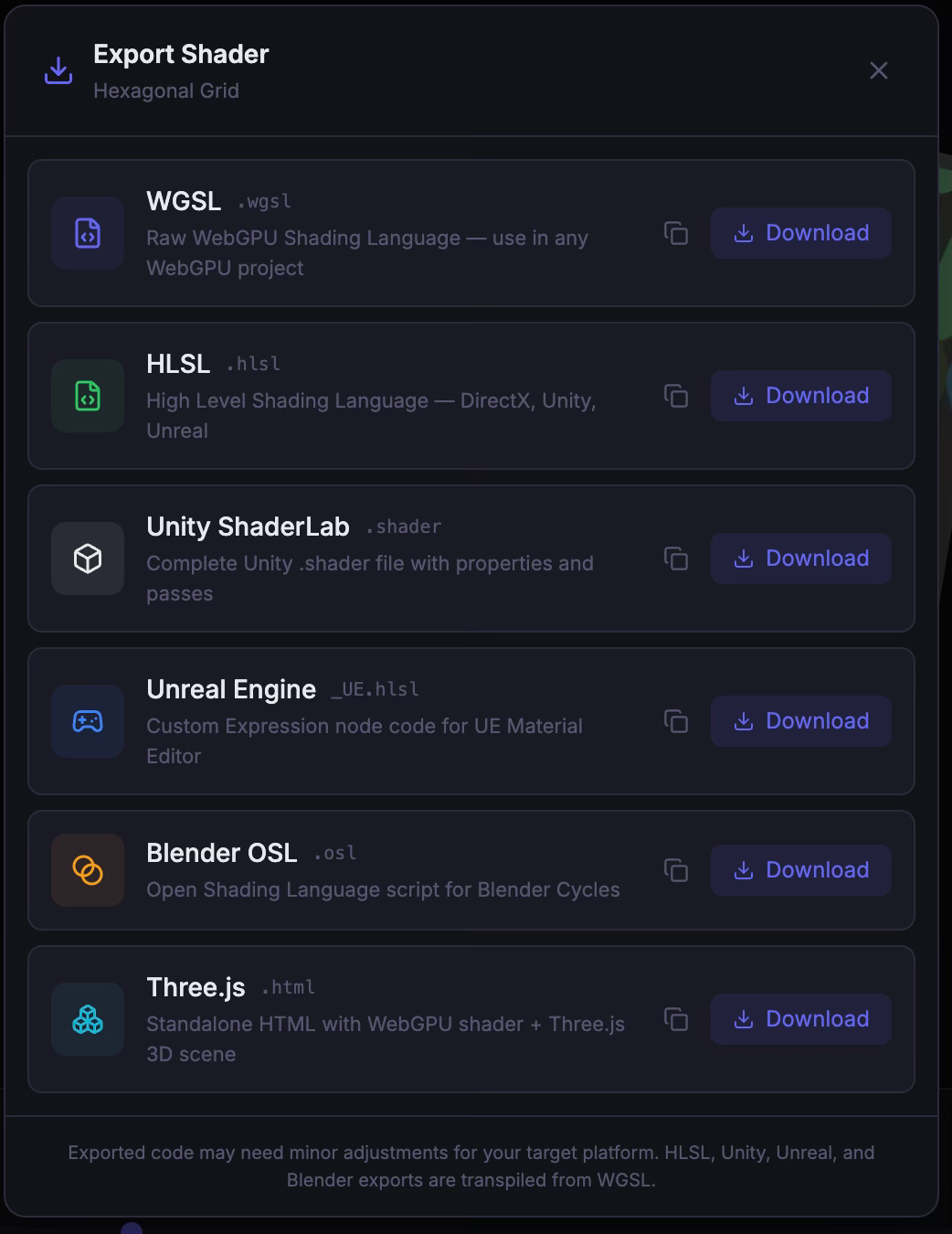

Any shader—whether hand-written, evolved, or morphed—can be exported to six targets: raw WGSL, HLSL, Unity ShaderLab, Unreal Engine Custom Expression nodes, Blender OSL, and Three.js as a standalone HTML scene.

The game engine exports matter because shader development in Unity and Unreal often involves a painful compile-wait-check-adjust cycle. ShaderVine’s live preview with instant recompilation means you can iterate on visual logic in the browser at interactive speed, then export to your engine of choice when you’re satisfied. It’s not a replacement for engine-native shader tools, but for the creative exploration phase—where you’re searching for the right effect before committing to an engine-specific implementation—it removes real friction.

The Three.js export follows the same composability principle from Threelab: a single self-contained HTML file that runs anywhere a browser runs. No dependencies, no build step. An agent can create a shader through the MCP tools, evolve it, and export a standalone web artifact without a human touching the interface.

What I Learned

A few honest observations.

Compute shaders are where WebGPU’s advantage over WebGL becomes visceral. WebGL had no compute shader support at all—you had to fake GPU compute by encoding data into texture pixels and running fragment shaders. WebGPU’s native compute pipeline with storage buffers, workgroup shared memory, and atomic operations is a qualitative leap. The ping-pong buffer pattern powering ShaderVine’s simulations is clean, efficient, and obvious in a way the WebGL hacks never were.

The text bottleneck is less severe here than I feared. Shaders are an interesting counterpoint to my Threelab experience. Because shader code is compact and the mapping from code to visual output is relatively direct, the feedback loop with an AI agent is tighter than it was for complex 3D scene composition. The challenge shifts from “I can’t describe what I want” to “I want to explore variations faster than I can articulate them,” which is exactly the problem the evolution and morphing tools address.

MCP design is becoming a craft. Having now built MCP servers for Threelab, a CMS, a chess platform, and ShaderVine, I’ve started developing intuitions about what makes a good agent-facing API. The tools need to be composable, discoverable (clear names a model can reason about), and bounded.

The Bigger Picture

ShaderVine is a small but concrete data point suggesting that agentic coding will accelerate the direct-from-imagination era.

GPU compute shaders—one of the most technically demanding areas of graphics programming—are now accessible through a browser, editable with AI assistance, and evolvable through direct visual manipulation. The barrier to entry for creating sophisticated visual effects has dropped by an order of magnitude.

The Creator Era isn’t limited to web apps and SaaS products. It extends into the visual, the spatial, the aesthetic. When a solo developer can prototype a fluid simulation in the browser on a Saturday morning, evolve it through genetic selection over lunch, and export it to Unreal in the afternoon—that’s a fundamentally different creative reality than the one that existed a year ago.

The tools are getting better. The protocols are maturing. The web, once again, is the canvas.

Further Reading

When AI Learns to Paint: Three.js and Claude: The Threelab project that preceded ShaderVine, and the modality gap observations that motivated it

The Agentic Web: Discovery, Commerce, and Creation: The broader thesis on MCP, agentic protocols, and why the open web is the substrate of the agentic era

Web Renaissance, Part 2: How the Web Could Eat Software: The 2024 piece on WebGPU, WebAssembly, and the possibilities for games on the web

Software’s Creator Era Has Arrived: The Pioneer → Engineering → Creator framework for understanding how AI is transforming software development

The State of AI Agents in 2026: The full landscape report on agents, MCP adoption, and the agentic economy