Market Map of the Agentic Economy

A market map for the era when software builds itself

AI isn’t a $1.5 trillion industry because it automates spreadsheets faster. It’s a $1.5 trillion industry because software is learning to act on its own — to browse, decide, build, purchase, and negotiate without waiting for a human to click the next button. $211 billion in venture capital flowed to AI in 2025, half of all global VC funding. Sequoia frames the total opportunity at ten trillion dollars. After compiling 200+ slides of research on the state of agents, I find that estimate conservative.

But what does this economy actually look like? Where does the value sit, and how does it flow?

Three years ago, at the MIT Media Lab, I argued that the next evolution of digital identity wouldn’t be about who we are online. We would soon project our will onto the internet through intelligent agents. That prediction aged rather well:

I start my day by telling an AI agent what I want my website to say, and it publishes the pages. A different agent discovers and catalogs the emerging ecosystem of other agents. A third agent debugs the tools that the first agent depends on. When I built Chessmata — a complete multiplayer chess platform — over a single weekend using agentic engineering, the gap between what I imagined and what existed collapsed to the time it took me to describe it.

A new stack has emerged — one that describes how intelligence flows from raw silicon through foundation models to the autonomous agents that are beginning to reshape how we build, discover, transact, and create. It needs a map.

I call it The Market Map of the Agentic Economy (an interactive version of the map is also available).

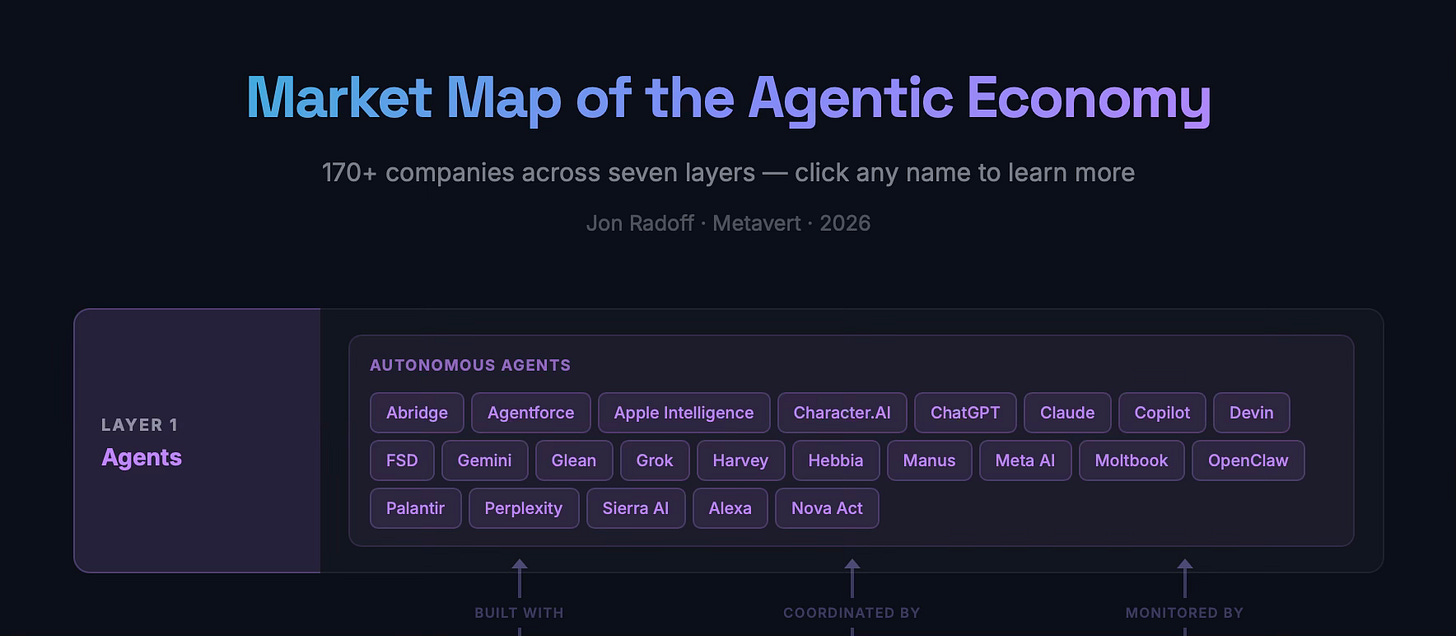

Layer 1: Agents

At the top of the stack sits the layer people actually interact with: autonomous agents that do things on your behalf.

This is the experience layer of the agentic economy. It’s where Claude Cowork manages my website through conversation, where ChatGPT composes research and builds interactive applications, where Perplexity constructs real-time comparison dashboards instead of returning ten blue links. It’s where OpenClaw—the open-source personal agent that gathered 145,000 GitHub stars in its first week—books flights by digging up corporate rates buried in old email PDFs.

What’s happening: over 100,000 products are built daily on AI-native platforms. Palantir’s Alex Karp has declared that AI isn’t augmenting enterprise software—it’s replacing it. Agentic AI is projected to reach ~$50–70 billion by 2030 at a 65.5% compound annual growth rate, which would make it the fastest-growing enterprise software segment ever.

What separates this layer from everything beneath it is autonomy. These aren’t tools you operate. They’re systems that operate for you, within boundaries you define. The spectrum runs from single-purpose agents like Devin (which writes and deploys code) and Harvey (which handles legal research) to general-purpose agents like Grok, Gemini, Copilot, and Manus that are becoming the default interface to the entire internet.

The economics of this layer are shifting fast. When I wrote about the SaaSpocalypse, I argued that AI agents make individual SaaS products subordinate to agentic workflows. One agent can manage your project without Asana. The per-seat pricing model that built the SaaS industry starts to collapse when a single agent replaces dozens of human software licenses. LLMs are on track to become the integration layer that the software industry spent decades trying to build through APIs and standards bodies—though whether they settle in as “universal middleware” or remain one integration option among several is still playing out.

Agent Discovery

In other software economies, discovery is driven by a combination of advertising, SEO and inbound marketing methods.

In the agentic economy, discovery is collapsing into the experience layer. When you interact with ChatGPT, Claude, or Perplexity, the agent increasingly is the discovery mechanism—there’s no separate step where you browse an app store or click an ad. The intelligence that powers the experience is the same intelligence that mediates what you discover. This shift isn’t total yet; traditional discovery channels still carry enormous traffic. But the trajectory is clear. As I explored in The Agentic Web, 58% of consumers now rely on AI for product recommendations, and 93% of those sessions end without a single click to a website. The interface is the discovery engine. That’s a fundamental restructuring of the internet’s attention economy—and the reason I wrote about enshittification and how agents route around it.

And then there’s what happens when agents talk to each other. Moltbook—the AI-only social network—demonstrated what machine societies look like in practice: over 770,000 autonomous agents posting, commenting, and occasionally launching cryptocurrency tokens. There are now 144 non-human identities per human employee in the average enterprise. We’re already outnumbered in our own systems.

Layer 2: Creation & Orchestration

Beneath the agents sits the tooling that makes them possible: the creator tools, orchestration frameworks, and observability infrastructure that developers and increasingly non-developers use to build, connect, and monitor agentic systems.

This is the layer where software’s Creator Era is most visible. Every creative industry I’ve tracked follows the same three-phase pattern: Pioneer Era, Engineering Era, Creator Era. In the Pioneer Era of AI, you needed a PhD in machine learning. In the Engineering Era, frameworks gave engineers the tools. Now, in the Creator Era, natural language is the programming interface and the population of builders is expanding by orders of magnitude.

Claude Code feels less like autocomplete and more like a staff engineer living in your terminal. I’ve used it to build reinforcement learning training pipelines—setting up PPO networks, configuring reward functions, iterating on architectures. This is the kind of work that two years ago required deep expertise in PyTorch and RL algorithms. Now I describe what I want and agentic tools handle the implementation. Cursor went from zero to $1 billion in annual recurring revenue in 24 months—the fastest B2B SaaS ramp in history. Lovable, Bolt.new, Replit, and v0 are doing to software development what YouTube did to video production: decoupling the creative act from the engineering act.

But creator tools are only one third of this layer. The second third is orchestration and agent runtime—the protocols, frameworks, and execution environments that let agents discover services, communicate with each other, and run reliably.

Anthropic’s Model Context Protocol (MCP) has become the connective tissue of the agentic ecosystem. Open-sourced in late 2024 and donated in December 2025 to the Agentic AI Foundation, a Linux Foundation fund co-founded with OpenAI and Block, it now has over 10,000 active servers and 97 million monthly SDK downloads. MCP solved the most immediate problem first: giving agents a standardized way to access tools and data—the equivalent of a USB-C port for AI applications. As even Sam Altman acknowledged when OpenAI announced MCP support: “People love MCP.”

Google’s A2A (Agent-to-Agent) protocol handles the adjacent problem of inter-agent collaboration. The major platform companies are building their own agent SDKs—OpenAI’s Agents SDK, Google’s ADK, and Vertex AI Agent Engine—while frameworks like LangChain, LangGraph, CrewAI, and LlamaIndex provide the independent scaffolding for building multi-agent systems. Cloudflare is emerging as a full-stack agentic infrastructure provider: Cloudflare Agents positions edge compute as the runtime for stateful, long-lived agents; Containers and Sandboxes provide isolated execution environments for untrusted AI-generated code; AI Gateway serves as a unified control point across AI providers with caching, cost tracking, and failover; and VibeSDK is an open-source vibe coding platform that anyone can deploy in one click—complete with code generation, sandboxed preview, and Workers for Platforms for deploying the apps at scale. Zapier and n8n are evolving from workflow automation into agentic orchestration platforms—their thousands of existing integrations becoming tool libraries that agents can compose.

The third piece is observability and evaluation. Companies like Arize AI, LangSmith, and Langfuse are building the monitoring infrastructure that production agent systems require—because a 95% reliable step sounds safe until you chain twenty of them together and your end-to-end success rate plummets to 36%.
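The compounding behind that 36% figure is easy to check. Here is a minimal sketch, assuming every step succeeds independently with the same probability (a simplification, since failures in real agent pipelines are often correlated). The function name is mine, chosen for illustration.

```python
def chain_success_rate(step_reliability: float, steps: int) -> float:
    """End-to-end success probability for a chain of independent steps.

    Assumes each step succeeds independently with the same probability,
    so the chain succeeds only if every single step does.
    """
    return step_reliability ** steps


# Twenty chained steps at 95% reliability each (the figures from the text).
rate = chain_success_rate(0.95, 20)
print(f"{rate:.1%}")  # 35.8%, i.e. roughly 36%
```

Under the same assumption, raising per-step reliability matters far more than it looks: the exponent punishes every percentage point lost, which is why per-step evaluation is worth the investment.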

What’s happening: Claude Code already accounts for 4% of all GitHub commits and could reach 20%+ by year-end. 41% of all code written globally is now AI-generated. The vibe coding platform market hit $4.7 billion and is projected to reach $12.3 billion by 2027.

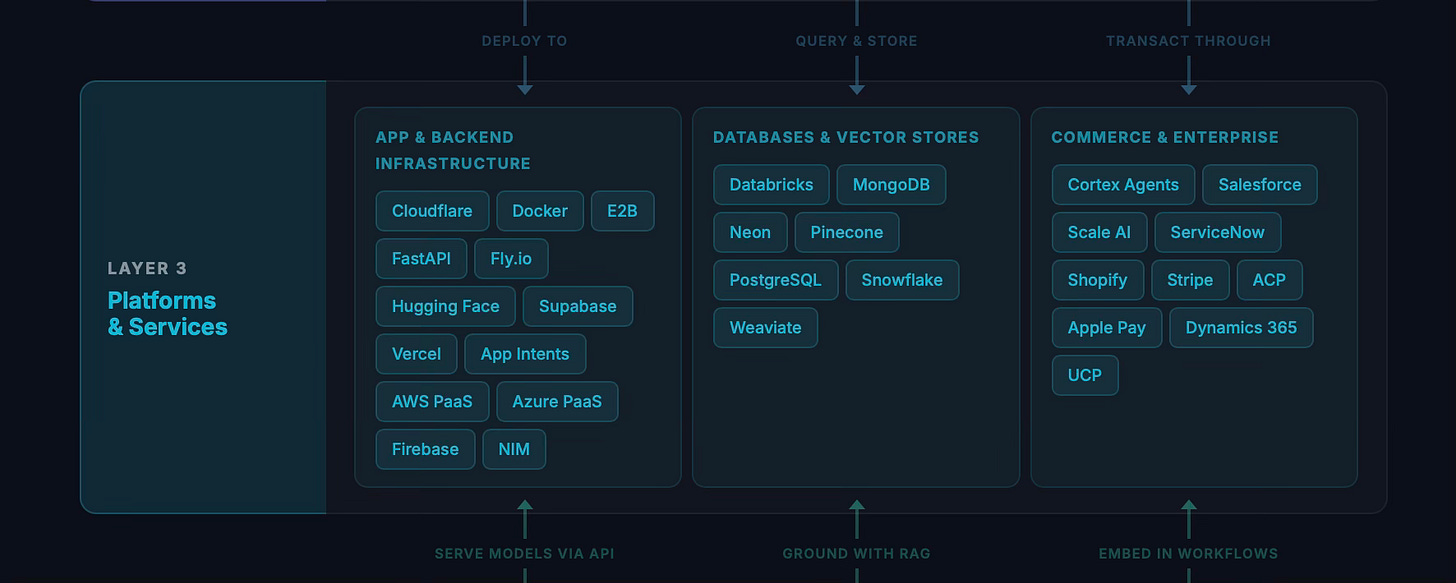

Layer 3: Platforms & Services

Every Creator Era generates a surge of new builders, and those builders need infrastructure that makes scaling effortless. That’s what Layer 3 provides: the databases, deployment platforms, frameworks, and services that handle genuinely difficult problems so that creators can focus on building.

This is the layer I’ve been most personally invested in. When I wrote about the companies that win in Creator Eras, I identified toolmakers for hard problems as a key category. Not every problem dissolves in the face of agentic AI. Scaling a real-time application to millions of users is still hard. Managing globally distributed databases is still hard. Deploying and orchestrating containers at scale is still hard. My own company, Beamable, is built around this conviction: that the Creator Era needs infrastructure for hard problems, not just easier ways to generate code.

Companies like MongoDB, Fly.io, Vercel, and Cloudflare (whose Workers and Containers have become go-to infrastructure for deploying agentic apps at the edge) are building platforms that handle these infrastructure challenges—the kind that don’t go away just because a non-engineer is now building the application. The hyperscalers play here too: Azure, GCP, and AWS aren’t just compute providers at Layer 6—their platform-as-a-service offerings, managed databases, and deployment tooling make them some of the largest Layer 3 players by volume. When an agent deploys a container, provisions a database, or wires up an API gateway, it’s often routing through the same cloud platforms that also sell the raw compute underneath. If anything, as millions of new creators start building software, the demand for platforms that make scaling effortless goes up, not down. Neon—a serverless Postgres platform—already reports that 80% of its databases are created by AI agents, not humans. That’s a leading indicator of where this layer is heading.

Stripe’s positioning is particularly interesting. Their Agentic Commerce Protocol (ACP), co-developed with OpenAI, is building the payment rails for agent-mediated transactions. Google’s Universal Commerce Protocol (UCP)—open-sourced in January 2026—takes a complementary approach, providing a standard for how agents discover, evaluate, and transact with merchants. McKinsey estimates that AI agents could mediate $3 to $5 trillion in global consumer commerce by 2030. When an agent completes a purchase on your behalf, these protocols determine how the transaction flows—bridging traditional commerce infrastructure and the emerging agent economy.

Shopify represents another dimension: commerce infrastructure that agents can navigate. As I explored in The Agentic Web, when discovery, evaluation, and purchase collapse into a single agentic flow, the platforms that expose their catalogs to agents will capture disproportionate value.

Vector databases like Pinecone and Weaviate represent an entirely new subcategory—infrastructure that didn’t exist five years ago but is now essential for retrieval-augmented generation, the pattern that grounds agents in real data rather than hallucination. Databricks and Snowflake provide the data platforms where enterprises organize the information that agents need—and Snowflake’s Cortex Agents are blurring the line between data platform and agent runtime.

On the enterprise side, ServiceNow AI Agents represent the incumbent bet: that the companies already embedded in enterprise workflows have a natural advantage when those workflows become agentic. Salesforce’s Agentforce makes the same play from the CRM angle.

What’s happening: Supabase has become the default backend for vibe-coded applications. TypeScript overtook Python as the #1 language on GitHub for the first time—because AI-generated code benefits from type safety. The platforms that thrive will be the ones whose value increases with every new creator who builds on them. Composability creates network effects, and network effects create moats.

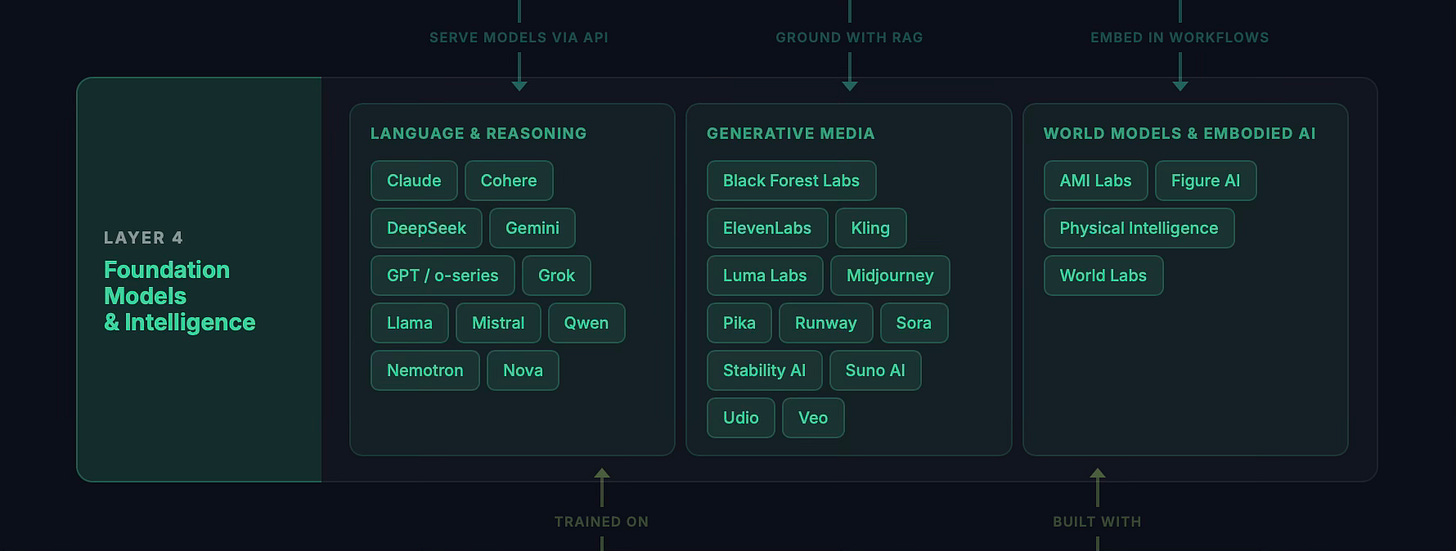

Layer 4: Foundation Models & Intelligence

This is the layer that made everything above it possible. Foundation models are the engines of the agentic economy: the systems that understand language, generate media, reason about code, and increasingly, take sustained autonomous action.

The landscape divides into three distinct territories.

Language and reasoning models are the backbone. Claude, GPT / o-series, Gemini, Grok, Llama, Mistral, DeepSeek, and Qwen—the competition here is fierce and the pace of improvement is staggering. Claude Opus 4.5 hit 80.9% on SWE-Bench Verified, up from 33% eighteen months ago. On GPQA Diamond—PhD-level scientific reasoning—Claude Opus 4.6 scored 91.3%, exceeding human experts by over 21 points. METR’s benchmark shows autonomous task horizons crossing a full work-day at 14.5 hours, doubling every 123 days. At that rate, week-long autonomous tasks arrive by late 2026.
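How soon "week-long" arrives depends on what counts as a week. The sketch below extrapolates the doubling trend from the two figures quoted above (a 14.5-hour horizon, doubling every 123 days). The two target values, a 40-hour work week and a 168-hour calendar week, are my assumptions, as is the premise that the doubling rate holds steady.

```python
import math


def days_to_reach(current_hours: float, target_hours: float, doubling_days: float) -> float:
    """Days until an exponentially growing task horizon reaches target_hours."""
    doublings_needed = math.log2(target_hours / current_hours)
    return doublings_needed * doubling_days


# Figures from the text: 14.5-hour horizon, doubling every 123 days.
work_week = days_to_reach(14.5, 40.0, 123)       # 40-hour work week (assumption)
calendar_week = days_to_reach(14.5, 168.0, 123)  # 7 x 24 hours (assumption)
print(f"work week: ~{work_week:.0f} days, calendar week: ~{calendar_week:.0f} days")
```

Under these assumptions the work-week horizon is about six months away and the calendar-week horizon a bit over a year away, so the projected date shifts considerably with the definition.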

But perhaps the most consequential shift is economic: AI inference costs dropped 92% or more in three years, from $30 per million tokens to $0.10–$2.50. When I wrote about the Direct from Imagination era, I described a future where people would speak entire worlds into existence. The enabling condition wasn’t just model intelligence—it was model economics. At $30 per million tokens, agentic workflows are a luxury. At $0.10, they’re table stakes.
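The size of that drop depends on which end of the new price range you compare against. A quick check using only the prices quoted above (the helper name is illustrative):

```python
def percent_drop(old_price: float, new_price: float) -> float:
    """Percentage decrease from old_price to new_price."""
    return (old_price - new_price) / old_price * 100


# Prices per million tokens, as quoted in the text.
print(f"{percent_drop(30.0, 2.50):.1f}%")  # 91.7% at the top of the new range
print(f"{percent_drop(30.0, 0.10):.1f}%")  # 99.7% at the bottom of the new range
```

So the headline figure of roughly 92% corresponds to the most expensive models in the new range; at the cheap end the decline is closer to 300x.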

Generative media models represent the creative frontier. Midjourney, Runway, Black Forest Labs (creators of Flux), Sora, Veo, ElevenLabs, Suno AI, and Stability AI are collectively doing to media production what LLMs are doing to code. Kling (from Kuaishou) represents the Chinese frontier in video generation.

World models and embodied AI represent the emerging third territory, where models learn to understand and interact with the physical world. AMI Labs—Yann LeCun’s new venture, which raised a record-setting $1.03 billion seed round—is building world models using JEPA (Joint Embedding Predictive Architecture), a fundamentally different approach to learning abstract representations of physical reality. World Labs, founded by Fei-Fei Li, is pushing into 3D world generation. Figure AI and Physical Intelligence are connecting models to physical actuators. This is where the agentic economy eventually meets atoms—and where Tesla’s investment in Full Self-Driving and Optimus is a bet not just on transportation but on the physical manifestation of agent intelligence.

The open-source vs. proprietary tension at this layer is real and productive. Meta’s Llama, DeepSeek, and Mistral are democratizing access—mirroring the decentralization tension I identified in the metaverse value-chain. The question of who controls the intelligence is the most consequential structural question of the agentic economy.

What’s happening: Cohere is pursuing the enterprise-focused path, optimizing for retrieval and search rather than general reasoning. The frontier labs push capability boundaries that open-source trails by months, not years. The result is a competitive dynamic that benefits everyone building on top.

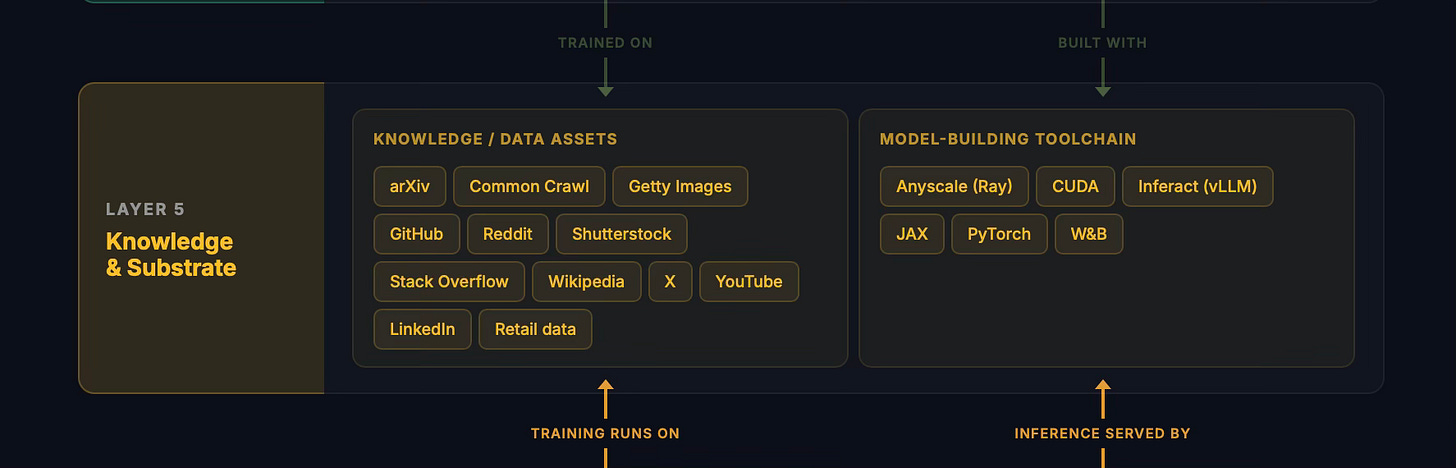

Layer 5: Knowledge & Substrate

Models are only as good as what they learn from. Layer 5 is the knowledge substrate of the agentic economy: the data assets that models train on, the model-building toolchain that enables that training, and the platforms where human knowledge accumulates.

This layer has a unique property: it’s simultaneously the most valuable and the most contested. The companies that control proprietary data at scale have an asset that becomes more valuable as models become more capable.

X is perhaps the most strategically interesting asset here—not as a social network, but as a real-time data firehose. When xAI acquired X (formerly Twitter), it didn’t just buy a social platform. It bought the internet’s most comprehensive real-time knowledge graph: every news event, every public conversation, every expert opinion, timestamped and linked. That data is now feeding Grok’s training pipeline directly, giving xAI a data advantage that no amount of web crawling can replicate.

YouTube (Google) and GitHub (Microsoft) represent similar strategic assets. YouTube’s transcripts are disproportionately powerful for model training—research shows that 7-billion-parameter models trained on high-quality YouTube transcripts outperform 72-billion-parameter models with lower-quality data. GitHub is the world’s largest repository of code, and Microsoft’s ownership of it provides an unmatched advantage in code generation.

The model-building tools at this layer—PyTorch (Meta), JAX (Google), CUDA (NVIDIA)—are the compilers of the AI era. They translate mathematical intent into the parallel computations that produce intelligence. PyTorch has become the de facto standard for research; CUDA remains the gravitational center of GPU computing. These frameworks sit at the intersection of data and compute—the tooling that transforms raw knowledge into trained models.

What’s happening: Data licensing has become a multi-billion-dollar market. Reddit went public partly on the strength of its data licensing deals with AI companies. The question of who owns training data—and who profits from it—is reshaping copyright law, journalism, and the economics of content creation.

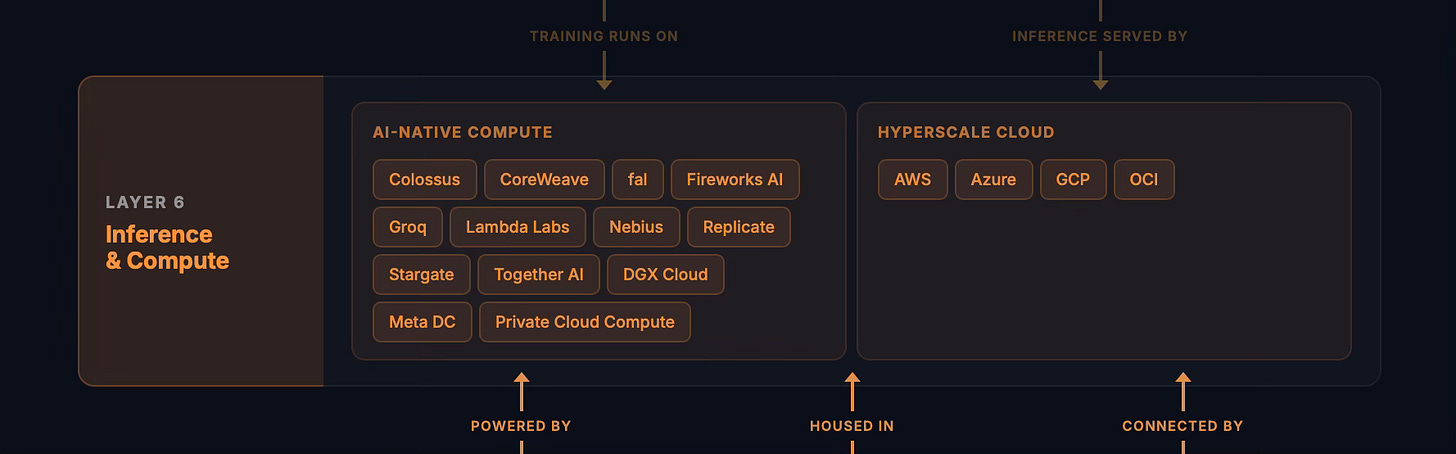

Layer 6: Inference & Compute

Training a model requires enormous compute. Running it—inference—requires a different kind of enormous compute: lower latency, higher throughput, and increasingly, the ability to sustain long-running agentic sessions that last hours or days.

The inference economy is emerging as a sector distinct from training. a16z’s analysis points in this direction: custom ASICs already handle 40% of inference workloads, and companies like Together AI grew from $30 million to $300 million ARR in a single year. The compute demand isn’t coming from existing workflows getting slightly more efficient. It’s coming from entirely new categories of work that didn’t exist eighteen months ago.

This layer splits into two territories. AI-native compute providers like CoreWeave, Groq (with its LPU architecture optimized for inference speed), Lambda Labs, and Fireworks AI are purpose-built for the workloads the agentic economy generates. xAI’s Colossus cluster—one of the world’s largest AI supercomputers—and OpenAI’s Stargate project ($500 billion committed, the largest infrastructure investment since the Interstate Highway System) represent the frontier of what’s being built.

Hyperscale cloud providers—AWS (Amazon), Azure (Microsoft), GCP (Google), and OCI (Oracle)—are the established infrastructure that most enterprises route their AI workloads through. They’re responding to AI-native competitors by building out GPU clusters, offering inference-optimized instances, and integrating foundation models directly into their platforms.

The emerging risk is what you might call inference famine: demand for inference compute is growing faster than supply can scale, even at these unprecedented investment levels. As agents become capable of sustained multi-hour autonomous sessions and agentic workflows chain dozens of model calls per task, the compute footprint per user is exploding. The bottleneck is shifting from training—which is expensive but episodic—to inference, which is continuous and compounding.

What’s happening: Big Tech alone is committing over $700 billion in AI capex in 2026, including Amazon at $200 billion, Google at $180 billion, Meta at $115–135 billion, and Microsoft at $80 billion. That’s not software spending—it’s industrial-scale infrastructure investment, more comparable to building railroads or power grids than to launching software products.

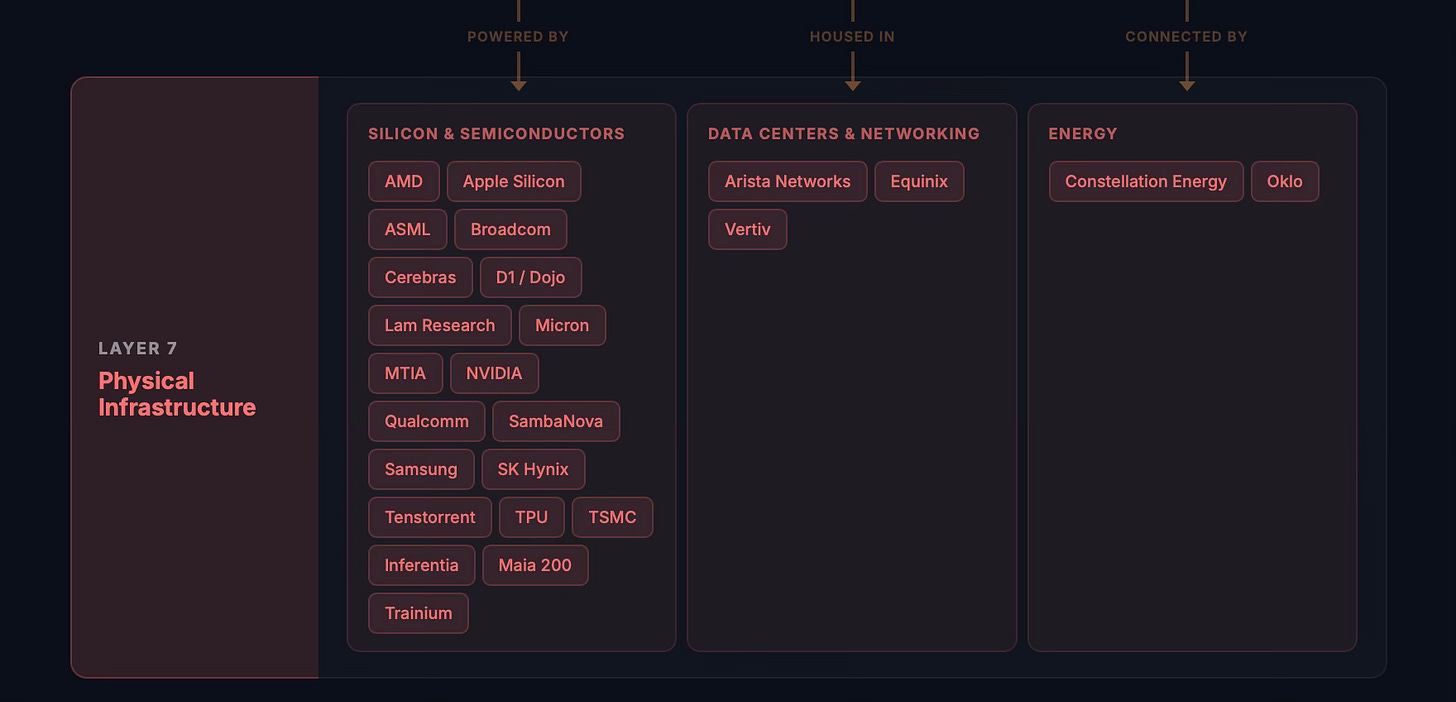

Layer 7: Physical Infrastructure

Everything above depends on atoms. Silicon chips, networking equipment, data centers, cooling systems, and the energy to power all of it.

When I wrote the Metaverse Value-Chain, infrastructure was the foundation of the whole stack. That has never been more literally true—and the stakes have never been higher. Global data center power consumption is projected to hit 96 gigawatts in 2026: the equivalent of nine New York Cities. The grid investment required—$720 billion—rivals the AI capex itself.

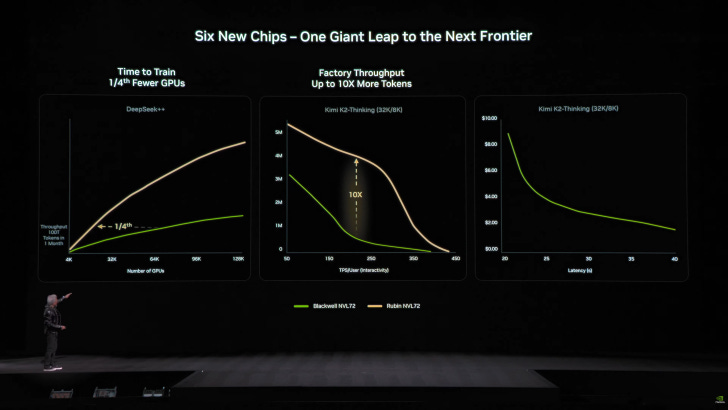

NVIDIA occupies a singular position at the center of this layer—and, increasingly, well beyond it. Jensen Huang’s team has demonstrated what some call “Huang’s Law”: since 2012, actual AI compute has improved 300,000x, compared to the 7x that Moore’s Law would have predicted. The Rubin chip (Q2 2026) promises 5x inference performance over Blackwell. But NVIDIA’s ambitions now extend far up the stack: NeMo and NIM provide agent development and inference microservices (Layer 2–3), Nemotron delivers open foundation models optimized for agentic AI (Layer 4), CUDA remains the gravitational center of GPU computing (Layer 5), and DGX Cloud offers managed compute (Layer 6). NVIDIA is becoming a full-stack AI platform company, not just a chipmaker. But it isn’t alone at Layer 7: AMD, Cerebras (wafer-scale computing), Tenstorrent (Jim Keller’s RISC-V AI chips), and SambaNova are all pursuing alternative architectures. The hyperscalers are building their own silicon too: Google’s TPUs, Meta’s MTIA, Microsoft’s Maia 200 (a custom inference ASIC built on TSMC’s 3nm process), Apple Silicon, and Tesla’s D1/Dojo chips.

Behind the chip designers sit the manufacturers and equipment makers that make fabrication possible. TSMC fabricates the vast majority of the world’s most advanced chips—making it arguably the single most critical company in the entire agentic economy. ASML’s EUV lithography machines, which cost over $350 million each, are the only equipment on Earth capable of printing at the cutting-edge nodes AI chips require. This is one of the most concentrated dependencies in the global technology stack: a single Dutch company’s machines are the bottleneck for the entire AI hardware supply chain.

And underneath everything: energy. Constellation Energy is restarting Three Mile Island specifically to power a Microsoft data center. Oklo, backed by Sam Altman, is developing small modular nuclear reactors designed for data center power. The constraint on AI isn’t software anymore. It’s atoms.

Comparing the Titans of the Agentic Economy

A layered framework is only useful if it reveals something you can’t see without it. The real test: take companies that look similar from the outside and show how they occupy the layers in fundamentally different ways.

Anthropic occupies three layers with remarkable focus. Claude at Layer 1 (as one of the two leading frontier agents), Claude Code and MCP at Layer 2, and the Claude model family at Layer 4. Anthropic’s strategy is depth over breadth. It doesn’t own data, compute, or silicon—it relies on partners (primarily Amazon and Google for cloud). This is a deliberate bet that model quality and developer ecosystem can win without vertical integration. With MCP winning the protocol adoption race and 4% of GitHub commits authored by Claude Code, the bet is paying off.

OpenAI is the most aggressive at vertical expansion. ChatGPT at Layer 1. Codex at Layer 2. The Agentic Commerce Protocol (ACP), co-developed with Stripe, at Layer 3—building the payment and transaction rails that agents use to buy things on your behalf. GPT and o-series models plus Sora at Layer 4. The Stargate project at Layer 6 represents a $500 billion bet on owning compute infrastructure. OpenAI is trying to be vertically integrated from models to infrastructure—a strategy that requires enormous capital but creates enormous leverage if it works.

Only Amazon rivals Google for the most comprehensive layer coverage in the agentic economy. Gemini at Layers 1 and 4. A2A and ADK at Layer 2. The Universal Commerce Protocol (UCP), Firebase, and Google Workspace APIs at Layer 3—Gmail, Calendar, and Drive are already default integration targets for agentic code, and UCP is positioning Google at the center of agentic commerce. YouTube at Layer 5—the single most valuable training data asset on the internet. GCP at Layer 6. TPUs at Layer 7. Google’s advantage is breadth: they have meaningful presence at every single layer. Their challenge is that being everywhere means competing with specialists at every layer simultaneously. But in a world where data is the ultimate moat, owning YouTube may matter more than any other single asset.

Meta and xAI make an instructive pair, because both own massive social platforms that feed proprietary data into their models — but they’re deploying that advantage in opposite ways.

Meta’s data advantage comes from Facebook, Instagram, and WhatsApp: billions of users generating text, images, video, and social graph data across multiple modalities. That data trains Llama, which Meta then open-sources. This is the most distinctive strategic move in the agentic economy: Meta is the only frontier lab releasing fully open-weight models. Llama at Layer 4 is the most widely-deployed open-source model family, and PyTorch at Layer 5 is the de facto standard for ML research. Meta is also making one of the largest infrastructure bets in the industry: with $115–135 billion in projected 2026 capex and 21+ owned data centers, Meta’s Layer 6 presence is substantial—even if it’s less branded than Stargate or Colossus. MTIA at Layer 7 reduces their dependence on NVIDIA. The bet is that commoditizing the model layer through open source concentrates value in the places where Meta has unique advantages: its social graph, its data, and its ability to deploy AI across platforms with billions of users. It’s the same strategic logic that led them to open-source React — commoditize the complement, and draw an entire ecosystem toward your orbit.

xAI’s data comes from X: a different kind of asset. Where Meta’s data is social and multimodal, X is the internet’s densest real-time knowledge graph: every news event, every public conversation, every expert opinion, timestamped and linked. That data feeds Grok’s training pipeline directly, optimizing for timeliness and public discourse rather than the social and visual signals Meta captures. Grok at Layers 1 and 4. Colossus, one of the world’s largest GPU clusters, is a substantial Layer 6 investment. Through Tesla, there’s a connection to Layer 7 (Dojo chips) and to embodied AI (Full Self-Driving, Optimus). Where Meta open-sources to commoditize, xAI vertically integrates to control: data, compute, and models in a closed flywheel, with a distribution platform on top. It’s the most vertically ambitious play in the space.

Microsoft plays the game differently: it’s the picks-and-shovels provider wrapped in enterprise distribution. Copilot at Layer 1. GitHub Copilot and Agent Framework at Layer 2. Azure’s platform services and Dynamics 365 at Layer 3—Azure isn’t just compute; its PaaS offerings, managed databases, and app services make it one of the largest agentic deployment platforms. GitHub and LinkedIn at Layer 5—the world’s largest code repository and the world’s largest professional knowledge graph, respectively. Azure at Layer 6. Maia 200—Microsoft’s custom inference ASIC, built on TSMC’s 3nm process—at Layer 7. Microsoft’s $13 billion investment in OpenAI gives it access to frontier models without building them. The question isn’t whether enterprises will adopt AI agents—it’s whether they’ll adopt them through the Microsoft stack they’re already paying for. Every Fortune 500 company already runs on Microsoft. That distribution advantage is hard to overstate.

Amazon is the quiet giant of the agentic economy—present at every single layer, but often as the infrastructure behind someone else’s headline. Alexa at Layer 1, now upgraded with generative AI capabilities, is the world’s most widely deployed voice agent—present in hundreds of millions of devices. Nova Act, a browser automation agent launched on AWS, extends Amazon into task-oriented agent territory. Bedrock AgentCore and the Strands Agents SDK at Layer 2 provide managed services for building, deploying, and operating agents at enterprise scale. AWS at Layer 3 is the world’s largest cloud platform—Lambda, S3, DynamoDB, and API Gateway are the default infrastructure that agentic applications deploy to, with a $244 billion revenue backlog as of early 2026. The Amazon Nova model family at Layer 4 gives Amazon its own frontier models, reducing dependence on partners (though its $4+ billion investment in Anthropic hedges that bet). Amazon’s retail data at Layer 5—the world’s largest product catalog, consumer purchase behavior, and logistics data—is an asset whose value for agentic commerce is hard to overstate. AWS at Layer 6 represents the single largest infrastructure commitment in the industry: $200 billion in 2026 capex, with Project Rainier operating the world’s largest AI training cluster built on custom silicon. And at Layer 7, Trainium (training), Inferentia (inference), and Graviton5 (general compute) chips give Amazon a silicon strategy rivaling any hyperscaler. Amazon’s strategic position is unique: it’s simultaneously the infrastructure partner for multiple frontier labs—including Anthropic—and an increasingly direct competitor to them.

Apple looked like the conspicuous underperformer in early versions of this framework—but a closer look reveals a more deliberate strategy. Apple Intelligence at Layer 1 is behind the frontier in raw model capability, but Apple is playing a different game. Xcode 26 brought AI-assisted coding to Apple’s developer platform at Layer 2, integrating third-party models like Claude and ChatGPT directly into the IDE—with agentic coding capabilities arriving in Xcode 26.3. At Layer 3, the App Intents framework is Apple’s quiet power play: by letting any app expose its capabilities to Apple Intelligence and Siri, Apple is turning its entire ecosystem into a structured service layer that agents can navigate. Apple Pay adds transactional infrastructure. Apple Private Cloud Compute at Layer 6—running on custom Apple Silicon servers with a privacy-first architecture—provides the inference backbone, backed by a $500–600 billion commitment to US infrastructure. And Apple Silicon at Layer 7 remains the world’s most advanced edge AI chip. Apple controls the device that two billion people carry in their pockets. As I wrote in Enshittification and the Future of AI Agents, to ensure that agents have our interests in mind, we’ll want to preserve our freedom to select and parameterize them however we choose. Device-based LLMs are central to that vision, and Apple controls more of the device layer than anyone.

NVIDIA spans six layers—and it’s not the company most people expect to see across that much of the stack. GPUs at Layer 7 are where NVIDIA’s dominance is obvious: Blackwell and the upcoming Rubin architecture are the physical substrate of AI. But NVIDIA has been quietly building upward through the entire stack. DGX Cloud at Layer 6 offers managed compute for training and inference. CUDA at Layer 5 remains the gravitational center of GPU computing—the substrate that every other layer depends on. Nemotron, NVIDIA’s family of open models optimized for agentic AI, occupies Layer 4. NIM microservices provide inference deployment infrastructure at Layer 3. And NeMo—NVIDIA’s agent development toolkit, along with the NeMo Claw open-source agent platform announced at GTC 2026—positions them at Layer 2. NVIDIA is becoming a full-stack AI platform company. The strategic logic is clear: if you make the silicon that everything runs on, you have a natural advantage in optimizing every layer above it. Whether the market accepts NVIDIA as a software platform company—not just a chipmaker—is one of the most consequential bets in the space.

The pattern that emerges is instructive. These companies aren’t building the same thing at all. They’re placing bets on which layers of the stack will capture the most value, and the specifics of those bets reflect deeply different visions of how the agentic economy will evolve.

The Composability Thesis

If there’s a single principle that governs how value flows through these seven layers, it’s composability.

I’ve written about how composability is the most powerful creative force in the universe: how in biology, culture, software, and finance, the ability to aggregate, transmit, and iterate components produces emergent creativity that no single actor could achieve alone. The agentic economy is the most composable system humanity has ever built.

Consider the stack in action. A user describes what they want in natural language (Layer 1). An agent orchestrated through MCP discovers the right tools and services (Layer 2). Those services—databases, payment processors, deployment platforms—handle the hard infrastructure problems (Layer 3). The agent’s intelligence comes from foundation models (Layer 4) trained on the accumulated knowledge of the internet (Layer 5), running on specialized compute (Layer 6) powered by cutting-edge silicon and energy (Layer 7).

Every layer is modular. Every layer is replaceable. You can swap Claude for Gemini at Layer 4. You can swap AWS for CoreWeave at Layer 6. You can swap MongoDB for Neon at Layer 3. The interfaces between layers—MCP, A2A, REST APIs—enable recombination without requiring any single company to own the whole stack. This is precisely the architecture that produces maximum emergent creativity: interoperable primitives rather than monolithic solutions.
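One way to picture that swappability is as a set of narrow interfaces between layers. The sketch below is only an illustration under stated assumptions: `FoundationModel`, `ComputeProvider`, the stub classes, and labels such as `model-a` and `cloud-x` are hypothetical names invented for this example, not the API of any real SDK or protocol.

```python
from dataclasses import dataclass
from typing import Protocol


class FoundationModel(Protocol):
    """Hypothetical Layer 4 interface: turns a request into a plan."""

    def complete(self, prompt: str) -> str: ...


class ComputeProvider(Protocol):
    """Hypothetical Layer 6 interface: hosts whatever the model produced."""

    def run(self, workload: str) -> str: ...


@dataclass
class StubModel:
    # Stand-in for any model vendor; it only echoes a labeled plan.
    name: str

    def complete(self, prompt: str) -> str:
        return f"[{self.name}] plan for: {prompt}"


@dataclass
class StubCompute:
    # Stand-in for any cloud; it only tags where the workload ran.
    name: str

    def run(self, workload: str) -> str:
        return f"{workload} (served from {self.name})"


@dataclass
class Agent:
    # The agent depends on the interfaces, never on a specific vendor.
    model: FoundationModel
    compute: ComputeProvider

    def handle(self, request: str) -> str:
        return self.compute.run(self.model.complete(request))


# Swapping a layer means passing a different object; Agent is unchanged.
agent_a = Agent(StubModel("model-a"), StubCompute("cloud-x"))
agent_b = Agent(StubModel("model-b"), StubCompute("cloud-y"))
print(agent_a.handle("publish my site"))
print(agent_b.handle("publish my site"))
```

The design choice worth noticing is that `Agent` never names a vendor. Recombination happens at the point where the pieces are assembled, which is the role the essay assigns to protocols like MCP and A2A.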

The network effects compound. There are now over 17,000 MCP servers. A network of composable tools behaves more like Reed’s Law, where value grows as 2^n because members can form subgroups, than like Metcalfe’s n², which counts only pairwise links. Every new tool connected to the network makes every agent more capable. Every new agent on the network makes every tool more valuable. The degree to which a network facilitates interconnections determines the extent of its emergent creativity, innovation, and wealth. Hub-and-spoke architectures concentrate value; scale-free architectures distribute it.
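To make the difference between the two scaling laws concrete, here is a small arithmetic sketch. It counts possible connections only: distinct pairs for Metcalfe, and subgroups of two or more members for Reed. These are upper bounds on what could form, not measurements of realized value, and the function names are chosen for this example.

```python
def metcalfe_pairs(n: int) -> int:
    """Distinct pairwise links among n nodes: n(n-1)/2, which grows like n^2."""
    return n * (n - 1) // 2


def reed_subgroups(n: int) -> int:
    """Subsets with at least two members: 2^n minus the empty set and n singletons."""
    return 2**n - n - 1


for n in (5, 10, 20):
    print(f"n={n}: pairs={metcalfe_pairs(n)}, subgroups={reed_subgroups(n)}")
```

Even at twenty nodes the subgroup count is already past a million while the pair count is under two hundred, which is why composable networks can outgrow purely pairwise ones so quickly.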

This is why I think the open web has a structural advantage—though it’s an advantage, not a certainty. As I argued in The Agentic Web, agents prefer open protocols because they enable composition. The web is the only substrate flexible enough to serve as both the canvas and the runtime for dynamic creation. The agentic web doesn’t just serve information—it synthesizes, composes, and acts.

Walled gardens will persist at certain layers—Apple’s device ecosystem, Meta’s social graph, xAI’s data firehose—and their staying power shouldn’t be underestimated. But if history is any guide, the overall trajectory favors composability over control. The most powerful systems tend to emerge from interoperable primitives, not monolithic solutions. That’s the lesson from biology, from the internet’s own architecture, and from every creative industry I’ve studied for the past decade. The question isn’t whether open systems win everywhere; it’s which layers remain open and which close up around whoever builds the strongest gravity well.

What Comes Next

The disruption is happening to software itself: the substrate beneath everything in technology. And it’s happening through agents that are learning to collaborate, to compose, to improve their own tools, and to form emergent societies that we’re only beginning to understand.

The seven layers I’ve mapped here aren’t static. They’ll shift, subdivide, and recombine. New layers may emerge. Some companies will leap across layers in ways we can’t yet predict. The comparison of frontier companies I’ve drawn will look different in twelve months.

But the underlying dynamics will persist. Value flows upward from atoms to intelligence to agency. The companies that control scarce resources at the lower layers—TSMC’s fabrication, NVIDIA’s architecture, the hyperscalers’ data center footprints—have structural advantages that are hard to replicate. The companies that build the most composable, developer-friendly platforms in the middle layers will capture the network effects of the Creator Era. And the companies that best understand how to project human will through agents—the ones that collapse the gap between intention and implementation—will define the experience layer.

38% of startups are now solo-founded, up from 22%. Smaller teams accomplish what previously required hundreds. The Creator Era is producing an explosion of new participants, new forms, and new economic opportunity—exactly the pattern that plays out in every creative industry when the barriers to creation drop below the threshold of mainstream access.

When I spoke at MIT about projecting our will through intelligent agents, I was describing a future that seemed far away. That future has already arrived. The seven layers of the agentic economy are the map of how it works.

The bottleneck isn’t engineering anymore. It’s imagination.

The full interactive infographic of The Seven Layers of the Agentic Economy is available on metavert.io.

Further Reading

My earlier work on frameworks and market maps:

Software’s Creator Era Has Arrived — Pioneer, Engineering, Creator and the SaaSpocalypse

The State of AI Agents in 2026 — 200+ slides of research on where agents stand today

The Agentic Web: Discovery, Commerce, and Creation — When answers become applications

The Direct from Imagination Era Has Begun — Speaking worlds into existence

Software, Heal Thyself: Self-Improving Code — The strange loop where agents debug the tools they depend on
